Sony AI’s Project Ace is the first robot to beat elite human players in a competitive physical sport — under official rules, with licensed referees, and on a real table.

Games have hosted some of AI’s most celebrated milestones, but table tennis has stayed out of reach. For more than four decades, roboticists have tried to build a machine fast enough, precise enough, and smart enough to rally with elite human players. None succeeded. The challenge was not just physical speed. It was the combination of split-second perception, real-time decision-making, and adaptive strategy that elite players master through years of practice.

On 22 April 2026, Sony AI published a study in Nature that changes that story. A robot called Ace, developed over five years by Sony AI’s team in Zürich, defeated elite and professional table tennis players in official matches. The matches followed International Table Tennis Federation (ITTF) rules, and licensed officials from the Japan Table Tennis Association refereed every one. This was not a staged demonstration or a controlled lab experiment. Ace is the first AI system to beat expert human players in a competitive physical sport under real conditions.

What’s Happening & Why It Matters

Five Years in the Making: What Is Project Ace?

Sony AI began Project Ace in 2020 — one of the laboratory’s very first research efforts. At that point, the team had no office, no large workforce, and a humble starting goal. “The beginnings of the project were really humble,” said Michael Spranger, President of Sony AI. “It started with juggling the ball. Then, cooperative rallies where a human works with the robot to keep the rally going. From there, we moved to playing against increasingly stronger table tennis players.”

The project spent five years progressing through carefully designed milestones, each building on the last. Safety was the priority. In early testing, players wore helmets, pads, and glasses. Only after rigorous safety validation did the team remove the protective gear. “For the first time at this speed, robots are actually interacting with humans,” Spranger noted. Ace therefore required more than engineering breakthroughs: it also required a framework for human-robot interaction at speeds previously considered impossible.

Peter Dürr, Sony AI Director and project lead, framed the significance plainly. “This research has shown that an autonomous robot can, in fact, win at a competitive sport, matching or exceeding the reaction time and decision-making of humans in a physical space. Table tennis requires split-second decisions, speed, and power. This represents a significant step toward creating robots with applications in fast, precise, and real-time human interactions.”

The Hardware: Built to Move at Inhuman Speed

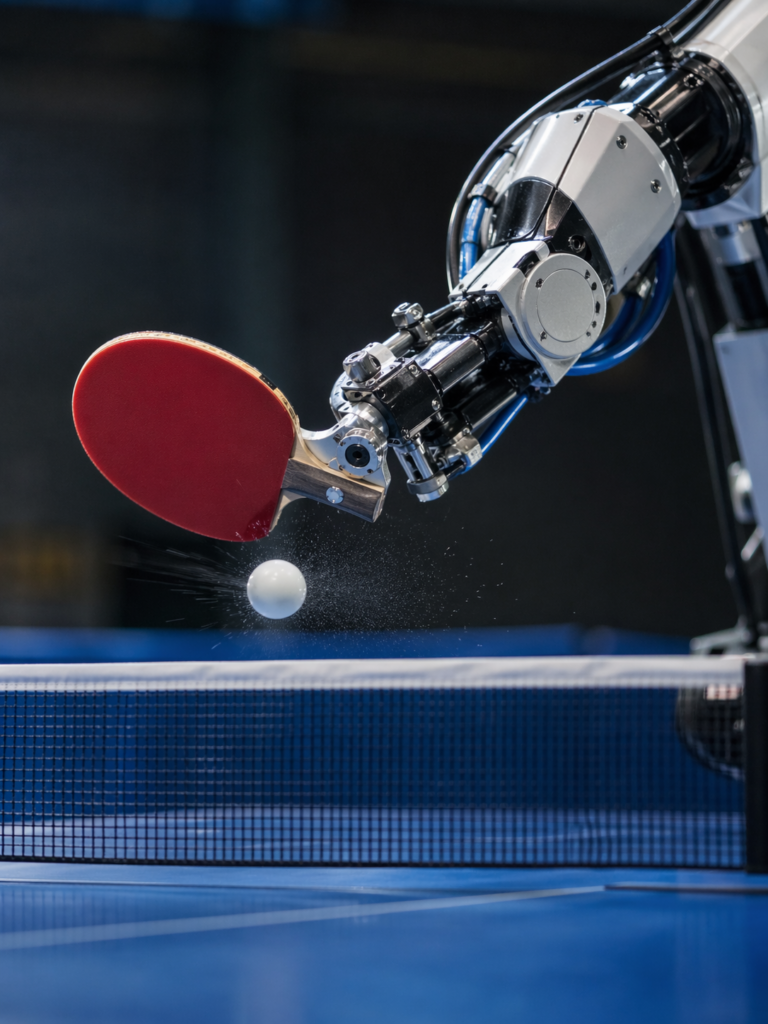

Ace is built around a custom eight-degree-of-freedom robotic arm, manufactured using optimised lightweight alloys. The arm delivers human-level reach and acceleration while remaining safe to operate near people. It runs on a track system that allows horizontal repositioning during play, which gives Ace the spatial coverage needed to reach wide shots.

The perception system is where Ace truly separates itself. It uses a hybrid approach that no previous robot table tennis system had attempted at this scale. Nine active pixel sensor cameras track the ball’s three-dimensional position in real time. In addition, three gaze-control systems combine event-based vision sensors, pan-and-tilt mirrors, and telephoto tunable lenses. These measure the ball’s angular velocity and spin at up to 700 Hz, fast enough to capture motion invisible to the human eye.
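To put the 700 Hz figure in context, the short calculation below relates it to the fastest spin quoted in this article’s results (450 radians per second). It is only back-of-the-envelope arithmetic on those two published numbers. The function names are illustrative and do not come from Sony AI.

```python
import math

SPIN_SAMPLE_RATE_HZ = 700.0   # spin measurement rate quoted in the article
MAX_SPIN_RAD_PER_S = 450.0    # highest spin quoted in the article's results


def rotation_per_sample_deg(spin_rad_per_s: float, sample_rate_hz: float) -> float:
    """Degrees the ball rotates between two consecutive spin measurements."""
    return math.degrees(spin_rad_per_s / sample_rate_hz)


def samples_per_revolution(spin_rad_per_s: float, sample_rate_hz: float) -> float:
    """Number of spin measurements taken during one full turn of the ball."""
    revolutions_per_second = spin_rad_per_s / (2.0 * math.pi)
    return sample_rate_hz / revolutions_per_second


deg_per_sample = rotation_per_sample_deg(MAX_SPIN_RAD_PER_S, SPIN_SAMPLE_RATE_HZ)
per_rev = samples_per_revolution(MAX_SPIN_RAD_PER_S, SPIN_SAMPLE_RATE_HZ)
print(f"{deg_per_sample:.1f} degrees per sample, {per_rev:.1f} samples per revolution")
```

Under these numbers the ball turns roughly 37 degrees between measurements, so even the fastest quoted spin is sampled nearly ten times per revolution.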

Event-based sensors differ fundamentally from conventional cameras. Traditional cameras take rapid, successive photographs. Event-based sensors fire only when something changes in the visual field, so they produce extremely low-latency data on moving objects. Together, the nine frame cameras and three event-based systems give Ace an end-to-end latency of just 20.2 milliseconds, compared with roughly 230 milliseconds for an elite human player. In other words, Ace perceives and begins responding to the ball’s flight approximately 11 times faster than the best human players in the world (230 / 20.2 ≈ 11.4).
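The difference between frames and events can be illustrated with a minimal sketch. The code below approximates an event sensor by comparing two small frames and reporting only the pixels whose log-brightness changed by more than a threshold. This is a simplification for illustration: real event sensors fire asynchronously per pixel rather than by differencing frames, and the function name, threshold, and tiny 4×5 scene are all assumptions, not details of Ace’s hardware.

```python
import math


def events_between(prev_frame, next_frame, threshold=0.2):
    """Return (row, col, polarity) for each pixel whose log-brightness changed.

    Polarity is +1 when the pixel got brighter and -1 when it got darker.
    Pixels that did not change produce no output at all.
    """
    events = []
    for r, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for c, (p, n) in enumerate(zip(prev_row, next_row)):
            # Small offset avoids log(0) for completely dark pixels.
            delta = math.log(n + 1e-3) - math.log(p + 1e-3)
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events


def frame_with_ball(col, rows=4, cols=5):
    """Dim background with one bright 'ball' pixel in row 2 (hypothetical scene)."""
    frame = [[0.1] * cols for _ in range(rows)]
    frame[2][col] = 1.0
    return frame


frame_a = frame_with_ball(1)
frame_b = frame_with_ball(2)   # the ball moved one pixel to the right

moving = events_between(frame_a, frame_b)
static = events_between(frame_a, frame_a)
print(moving)   # only the two pixels the ball left and entered
print(static)   # an unchanged scene yields no events
```

A frame camera would transmit all 20 pixels each time. The event-style output contains just two entries for the moving ball and nothing for a static scene, which is the property that keeps latency low.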

The AI: Three Layers of Learning

Ace’s intelligence operates across three distinct layers, each governing a different timescale of decision-making.

The first layer is Skill — the ability to move joints rapidly and generate specific spin, power, and shot direction in real time. This layer governs every individual contact between racket and ball. The second layer is Tactics — how Ace responds within a rally, deciding shot placement and pace relative to the opponent’s position. The third layer is Strategy — how Ace’s decisions evolve across a full match. Sony AI Chief Scientist Peter Stone described the current state directly. “Our emphasis for this Nature paper is on the Skill, and that’s where most of the reinforcement learning is. There’s still room for improvement at the tactics and strategy level.”

Ace’s control system is based on model-free reinforcement learning, meaning it learns a policy directly from trial-and-error experience rather than from pre-programmed rules or an explicit model of the ball’s dynamics. The entire skill training process takes place in simulation, where Ace’s AI plays against itself millions of times in a virtual environment. The trained policies then transfer directly to the physical robot. The team also used a technique called a privileged critic during training. In simulation, this component has access to perfect information (ball position, spin, and velocity) that no real sensor could ever provide. The policy itself, however, learns only from realistic sensor inputs.
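The privileged-critic idea can be shown on a toy problem. The sketch below is not Sony AI’s implementation. It is a minimal asymmetric actor-critic under stated assumptions: a one-step task in which the “ball position” `x` is known exactly to the simulator, the actor sees only a noisy reading `obs`, and the critic is allowed to see `x`. All names, learning rates, and noise levels are hypothetical choices for illustration.

```python
import random


def train(steps=20000, seed=0):
    """Train a linear Gaussian policy with a critic that sees the true state."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0          # actor: mean action = w * obs + b
    c = [0.0, 0.0, 0.0]      # critic weights over privileged features [1, x, x^2]
    sigma = 0.2              # fixed exploration noise of the policy
    lr_actor, lr_critic = 0.005, 0.05
    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)        # true ball position (simulator only)
        obs = x + rng.gauss(0.0, 0.1)     # noisy sensor reading the actor sees
        mean = w * obs + b
        action = mean + sigma * rng.gauss(0.0, 1.0)
        reward = -(action - x) ** 2       # best action is to hit where the ball is

        # Privileged critic: value estimate computed from the true state x.
        feats = [1.0, x, x * x]
        value = sum(ci * fi for ci, fi in zip(c, feats))
        advantage = reward - value

        # Policy-gradient step: the actor's gradient uses only obs, never x.
        score = (action - mean) / sigma ** 2
        w += lr_actor * advantage * score * obs
        b += lr_actor * advantage * score

        # Critic regression step toward the observed reward.
        c = [ci + lr_critic * advantage * fi for ci, fi in zip(c, feats)]
    return w, b


def mean_squared_error(w, b, n=5000, seed=1):
    """Average squared miss of the policy's mean action on fresh noisy readings."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        obs = x + rng.gauss(0.0, 0.1)
        total += (w * obs + b - x) ** 2
    return total / n


w, b = train()
print("untrained error:", mean_squared_error(0.0, 0.0))
print("trained error:  ", mean_squared_error(w, b))
```

The design point is the asymmetry: the critic’s perfect information only shapes the training signal, so the learned policy still runs on sensor readings alone and can be deployed where no perfect information exists.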

Dürr described the result of this approach with visible surprise. “It totally blew my mind,” he said. “I didn’t think this was possible at all, but with this kind of privileged information fed to the critic, it turns out the policy can learn how to do sensor fusion and anticipate the trajectory of a table-tennis ball.” Ace, in effect, teaches itself to predict where the ball will be before it arrives. It was not told how. It generalised from better-informed training signals.

The Results: Elite Players Beaten Under Official Rules

For the results published in Nature, Ace competed against five elite players and two professional players. All matches followed full ITTF regulations, and licensed umpires from the Japan Table Tennis Association officiated. Ace achieved three victories in five matches against the elite player group. The matches were competitive throughout, which indicates that Ace was not simply winning on lucky shots: it was sustaining extended rallies and adapting to each opponent.

Ace achieved a return rate above 75% for spins up to 450 radians per second. It also showed a particularly striking capability against unusual shots. When balls clipped the net, an edge case that is extremely difficult to model in simulation, Ace adapted in real time. This suggests genuine generalisation beyond its training data. After the Nature manuscript was submitted, the team continued testing, with further matches in December 2025 and March 2026. In those additional matches, Ace beat professional players. Compared with earlier evaluations, it also demonstrated higher shot speeds, more aggressive placement near the table edge, and faster rally pacing. The system, in short, continues to improve under competitive conditions.

Beyond Table Tennis

Sony AI positions Ace within a lineage of AI milestones. Deep Blue defeated Kasparov at chess in 1997. In 2016, AlphaGo mastered intuition in Go. Sony AI’s own GT Sophy achieved superhuman control in the racing simulator Gran Turismo in 2022. Ace extends that lineage from digital domains into the physical world. “This is bigger than table tennis,” said Stone. “It takes its place in a series of AI landmarks. It’s the very first time there’s been a human expert-level demonstration of competitive play in the real world across any sport, not just table tennis.”

Spranger articulated the project’s deeper purpose. “We wanted to prove that AI doesn’t just exist in virtual spaces. It’s not just tech you interact with in the virtual world. You can actually have a physical experience, and the technology is ready for that.” The implications extend far beyond sport. A robot that perceives, decides, and acts at millisecond timescales in unpredictable human environments could also perform precision tasks in homes, hospitals, warehouses, and factories.

The Human Element

The professional players did not respond to Ace with fear or hostility. Japanese professional player Mayuka Taira, known for reaching the women’s singles final at the 2019 US Open Table Tennis Championships, competed against Ace in official matches in Tokyo. Her matches generated extended rallies and competitive exchanges. Dürr watched one of Ace’s returns against Taira with visible excitement. “Yes!” he exclaimed after Ace returned a smash that most robots, and many humans, would have missed entirely. The project also involved elite players, coaches, past Olympians, and industry experts throughout the evaluation and match design process. Ace was not tested in isolation from the table tennis community. It was validated by it.

TF Summary: What’s Next

Sony AI has confirmed that Project Ace will continue developing its tactical and strategic layers. The team acknowledges that these higher-level decision-making capabilities are the next performance frontier. Stone noted that Skill is largely solved at the elite level, while tactics and match strategy represent the gap between Ace and the very best professional players globally. Sony AI also views the event-based perception approach, the simulation-to-real transfer methodology, and the three-layer learning architecture as broadly applicable techniques. Ace’s underlying technology is not designed to stay on a table tennis court. It is designed to transfer to any environment where fast, precise, and safe physical AI matters.

MY FORECAST: The Nature publication opens the door for other robotics research groups to build on Ace’s architecture. The combination of event-based sensing, model-free reinforcement learning, and simulation-first training is a blueprint the field can adopt. The question is no longer whether AI can compete with elite humans in a fast physical sport. Ace has answered that definitively. The next question is how quickly these capabilities spread from the table to the rest of the world.
