YouTube Opens a New Escape Hatch for Public Figures, but Satire Still Keeps a Hall Pass

Deepfakes keep getting cheaper, quicker, and nastier. That was already obvious. The platforms are finally acting like they noticed the house is on fire.

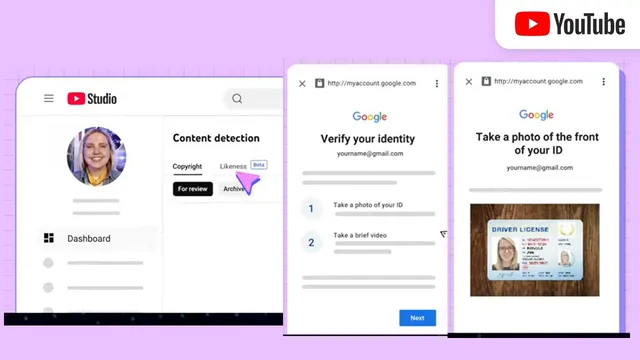

YouTube is expanding its AI Likeness Detection tool so that political candidates, government officials, and journalists can request the removal of videos that manipulate or fabricate their faces with artificial intelligence. The company is starting with a pilot group and baking identity verification into the process using government-issued ID and a selfie video.

That sounds sensible. It also sounds late.

What’s Happening & Why This Matters

YouTube’s AI Likeness Detection Program

YouTube launched the underlying tool last year inside YouTube Studio. It lets people identify videos in which their faces appear to be altered or generated using AI. To join the program, a channel owner or manager must verify identity with a government-issued ID and a short selfie video. After that, YouTube searches for videos using the person’s likeness and surfaces those clips under the Content detection tab in YouTube Studio. From there, the user can review individual videos and request the removal of one, several, or all manipulative clips.

The update widens access to a more sensitive class of users: politicians, public officials, and journalists. That choice matters because those groups are near the blast zone of modern misinformation. Politicians can get smeared through fake speeches. Journalists can get discredited through synthetic clips designed to make them look biased, unethical, or ridiculous. Government officials can get impersonated in ways that trigger public confusion or even security concerns.

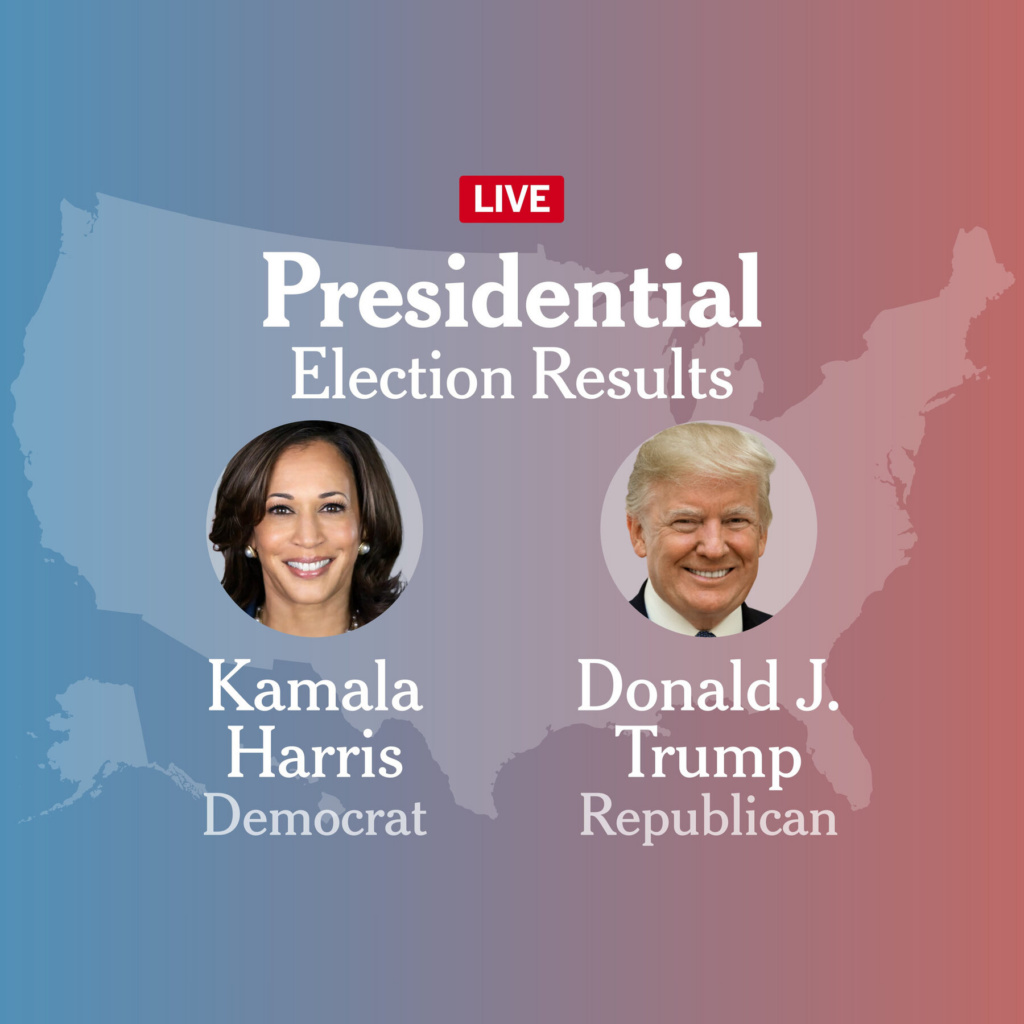

This is not theoretical. The file notes that AI deepfakes were a major issue during the 2024 U.S. presidential election. Reports indicate that foreign governments tried to manipulate voters using fake Kamala Harris videos, while fake celebrity endorsements were circulated by President Donald Trump.

That context explains why YouTube is moving here. The company is not only solving a product problem. It is trying to avoid becoming a permanent archive of machine-generated political poison.

Verification Is the Gatekeeper

One subtle but important point: the tool does not automatically wipe videos just because a face appears inside them. The first gate is identity verification, not automatic takedown. Users must prove they are who they say they are before YouTube will even start scanning for manipulated likenesses.

That is a practical move. Without a verification step, malicious users could weaponise the removal system against critics, creators, or rivals. The government ID plus selfie requirement is clearly designed to stop fake complaints from flooding the system.

Still, this raises its own tension. Platforms want to fight AI deception while collecting more sensitive identity data from users. The anti-deepfake fix starts to look a little like a surveillance funnel if you squint too hard. That does not make the system wrong. It does make the privacy tradeoff real.

This is the sort of strange little tax the AI era keeps imposing: to protect your likeness, first upload your face.

Public Figures Get Early Access

YouTube says the expansion will begin with a pilot group of unnamed journalists, candidates, and officials. That pilot-first approach is classic platform behaviour. Roll out slowly. Watch what breaks. Patch the ugly parts. Avoid promising the moon when you barely trust the ladder.

The reason public figures are first in line is obvious. Deepfake harm scales quickly around them.

A fake clip of a musician can be embarrassing. A fake clip of a mayor during a riot, a candidate before an election, or a journalist during a live geopolitical crisis can travel like gasoline on a hot sidewalk. The damage is not only reputational. It can be political, financial, legal, and social.

Public figures tend to face more frequent targeting. Their faces are easier to source, their voices are easier to model, and their online visibility makes distribution easier. Deepfake producers love famous faces because they come preloaded with audience attention.

That makes YouTube’s move feel less generous than defensive. The platform knows which users are most likely to bring lawyers, regulators, and press pressure if nothing changes.

YouTube Still Wants to Protect Satire and Parody

Here is where the clean narrative gets muddy.

YouTube says it will not remove every AI-generated or AI-manipulated video. The company explicitly says it will continue to protect free expression and allow parody and satirical content, even when that content criticises world leaders and influential figures. It says it will evaluate those exceptions carefully when removal requests come in.

That is a reasonable policy in principle. Satire matters. Political mockery matters. A platform that deletes every joke at the request of a powerful person is a highly polished censorship machine.

But the line between parody and manipulation is not always clean. A silly impression is one thing. A hyper-realistic fake speech timed to spread during a voting window is another. A satirical sketch signals itself as humour. A deliberately misleading deepfake often pretends to be authentic long enough to do damage.

YouTube is in a nasty middle seat. If it leans too hard toward removal, critics will say it protects elites from ridicule. If it leans too hard toward expression, critics will say it lets synthetic lies spread under a “just kidding” fig leaf.

And because this is the internet, both groups will probably scream simultaneously.

Journalism Is a Smart but Tricky Addition

Including journalists in the pilot is one of the smartest parts of the rollout.

Journalists increasingly work in hostile information environments. A fake clip can be used to undermine a reporter’s neutrality, fabricate offensive remarks, or create the appearance of misconduct. In conflict zones, that kind of manipulation can put real people at risk. A journalist with a synthetic face or voice attached to the wrong statement can quickly lose trust, access, or safety.

So yes, protecting journalists makes sense.

It also poses a practical issue. Journalism sometimes relies on satire, reenactment, documentary montage, or visual experimentation. Platforms will need to distinguish between malicious deepfakes targeting journalists and legitimate editorial or artistic uses that happen to include manipulated imagery. That is not impossible. It is not simple.

The hard part of deepfake moderation is not catching obvious garbage. The hard part is handling edge cases without looking either clueless or authoritarian.

The 2024 Election Changed the Mood

The file’s reference to the 2024 U.S. election matters because it captures the mood shift. Deepfakes moved from “we should keep an eye on this” to “this can distort democratic life in real time.” Reports of foreign actors circulating fake videos of Harris and fabricated celebrity endorsements tied to Trump showed that synthetic media is no longer an oddity hiding in forums. It is a campaign weapon.

That matters because platforms tend to move only after risk is too visible to ignore. Elections make the risk visible. So do wars. So do celebrity scandals. So do cases where fake media reaches millions before any label or takedown shows up.

YouTube’s choice should be read in that context. The company is responding to a media environment in which manipulated likenesses are part of the expected chaos surrounding politics and public life.

This is not the end of that chaos. It is the platform admitting the problem is real enough to warrant tooling.

The Tool Does Not Solve the Core Problem

A likeness-removal tool is useful, but it is not magic.

It helps after a fake appears. It does not stop creation. It does not stop reposts on other platforms. It does not stop a bad actor from distributing the clip elsewhere before the request is processed. It does not fix the “liar’s dividend” problem, where real footage gets dismissed as fake by people who find the truth inconvenient.

That is the maddening thing about deepfakes. The presence of synthetic media does not only create false content. It weakens trust in authentic footage.

So YouTube’s program is best understood as damage control, not prevention.

That still matters. Damage control is better than passive shrugging. But the public should not mistake a detection tab for a durable solution to AI deception.

This Is a Preview of the Rules to Come

The significance of the rollout is regulatory. Platforms are slowly building the controls they think lawmakers will eventually demand. Identity verification, likeness scanning, exception handling, removal workflows, and category-specific access all look like the early bones of future compliance frameworks.

Today, it is a pilot for politicians and journalists. Tomorrow, it may extend to celebrities, executives, teachers, doctors, activists, or private citizens. Once the infrastructure exists, the eligibility debate expands naturally.

The deeper question is not whether more groups will want access. Of course they will. The deeper question is whether platforms can scale the review process without turning it into either a bottleneck or a mess.

Because if the system works poorly, it will fail in the exact moments that matter most: fast news cycles, elections, scandals, and crises.

TF Summary: What’s Next

YouTube is expanding its AI Likeness Detection tool so political candidates, government officials, and journalists can request the removal of manipulated deepfake videos. The process uses government ID and selfie verification, scans YouTube for altered likenesses, and lets eligible users request takedowns through YouTube Studio. Yet YouTube is also preserving room for parody and satire, which means each request may be a judgment call rather than an automatic deletion.

MY FORECAST: The pilot will grow. More user categories will demand access, especially as deepfakes get better, cheaper, and nastier. YouTube will likely keep refining a two-track system: stronger removal tools for clearly deceptive likeness abuse, paired with carve-outs for obvious satire and commentary. Regulators will watch closely, and the platform’s success or failure here will shape what lawmakers expect from every other major video service facing the same synthetic-media flood.
