A Safety Dispute Turns Into A Federal Denylist Fight — And Every AI Vendor Gets The Message.

Washington loves a label. “Supply chain risk” is one of the nastiest ones in the toolbox. It usually targets foreign adversary-linked tech. This week, the Pentagon slaps it on a U.S. AI company: Anthropic.

The trigger is not a breach. It is a standoff over guardrails.

Anthropic refuses to loosen two specific restrictions on its model, Claude: no mass domestic surveillance and no fully autonomous weapons. The Pentagon pushes for “all lawful uses.” Anthropic says no. Then the Department of Defense (DoD) — rebranded by President Donald Trump as the “Department of War” in administration messaging — moves to designate Anthropic as a supply chain risk “effective immediately.”

Anthropic responds with a legal threat, a public clarification, and a calm warning: the decision establishes a dangerous precedent. It also invites a practical question for every enterprise customer: if the government can weaponise a procurement label against a domestic vendor during contract negotiations, who is next?

What’s Happening & Why This Matters

The Pentagon Pulls A Rare Trigger

A senior Pentagon official confirms the department has officially notified Anthropic leadership that the company and its products are “deemed a supply chain risk, effective immediately.”

That phrasing matters. “Supply chain risk” carries the weight of national security. It signals to contractors, integrators, and procurement teams that using the product in defence work could jeopardise contracts.

War Secretary Pete Hegseth previously posted that no military contractor or supplier “may conduct any commercial activity with Anthropic” under the designation. Anthropic argues that the actual written notification has a narrower scope than the public rhetoric implies.

This is the heart of the fight: the public threat sounds like a sweeping denylist. Anthropic claims the legal instrument can’t stretch that far.

Anthropic: The Ban Is Narrower Than The Headline

Dario Amodei, Anthropic’s CEO, says the Pentagon’s letter means contractors are only barred from using Claude “as a direct part of contracts with the Department of War,” not barred from using Claude across all other business lines simply because they also hold defence contracts.

Amodei anchors the argument in supply chain law, stating the Secretary of War must use the “least restrictive means necessary” to protect the supply chain. He says the designation does not — and cannot — limit uses unrelated to specific defence contracts.

This distinction is not academic. Many enterprises sell to the Pentagon while also operating huge commercial lines. If “any commercial activity” were enforced literally, it would force contractors to choose between U.S. security work and using Anthropic tools across their entire business. That would be a procurement earthquake.

Anthropic’s position attempts to keep the designation from becoming a global business freeze.

Microsoft: Claude Is Available Outside Defence Work

Microsoft effectively backs Anthropic’s interpretation.

A Microsoft spokesperson says its lawyers studied the designation and concluded Anthropic products, including Claude, can stay available to customers — excluding the Department of War — through Microsoft platforms such as M365, GitHub, and Microsoft’s AI Foundry. Microsoft also says it can continue non-defence-related work with Anthropic.

That statement carries weight. Microsoft is a major distribution channel for AI services. If Microsoft keeps embedding Claude for non-defence customers, the “blacklist” narrative changes from existential to targeted — still damaging, still precedent-setting, yet not instantly fatal.

The Pentagon has not issued a public clarification matching Microsoft’s language. That ambiguity leaves enterprises to read between the lines and rely on lawyers.

The Pentagon’s Core Demand: “All Lawful Uses”

The Pentagon frames the dispute as a chain-of-command issue.

A senior Pentagon official says the department will not allow a vendor to “insert itself into the chain of command” by restricting the lawful use of a critical capability, and warns that such restrictions could put warfighters at risk.

Anthropic says its two restrictions are narrow and high-level: no mass domestic surveillance and no fully autonomous weapons. Amodei says these exceptions do not interfere with operational decision-making and stresses that Anthropic aims to prevent categories of misuse that carry severe civic risk.

This is a philosophical clash, dressed as contract language.

The Pentagon wants discretion. Anthropic wants boundaries that constrain discretion.

Contractors’ Reaction: Lockheed Moves, Others Recalculate

Defence contractors respond quickly.

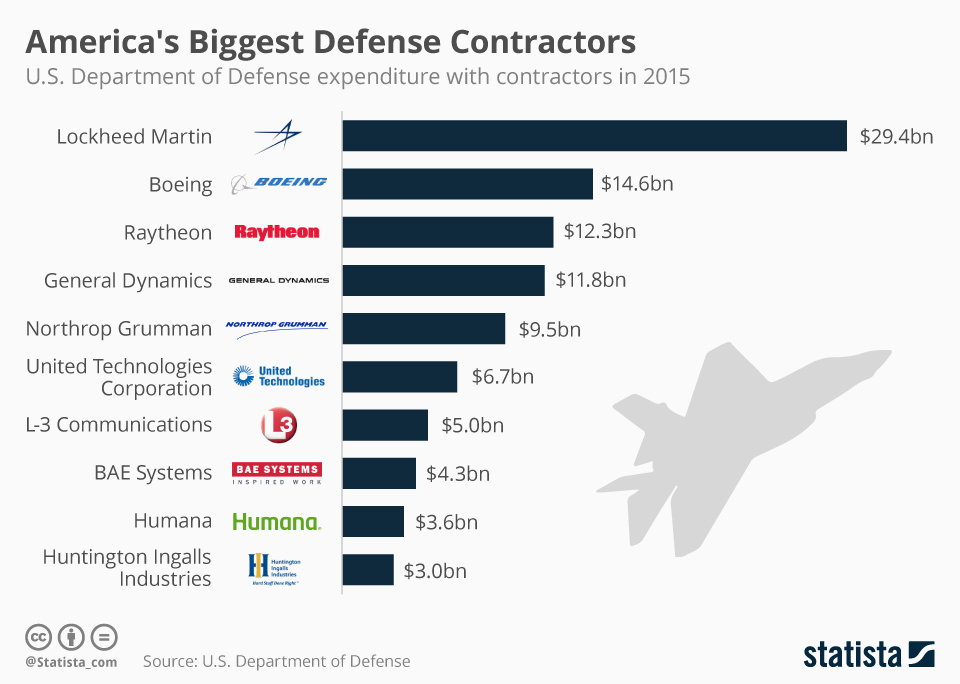

Lockheed Martin says it will follow the direction of the President and the Department of War and look to other large language model providers. It adds that it does not depend on any single vendor for its work.

That is the contractor playbook: diversify vendors, avoid being a hostage to a single supplier, and stay aligned with procurement guidance.

This also reveals a new reality. “Model portability” is not a nice-to-have. It’s a survival skill. Contractors who can swap model providers with minimal rework will win bids and dodge disruptions.

OpenAI Fills The Gap — And The Industry Splits

The dispute deepens a rivalry that already runs hot.

In the wake of Anthropic’s punishment, OpenAI announces a deal to support classified military environments, positioning itself as the replacement provider.

OpenAI CEO Sam Altman even says Anthropic should not be labelled a supply chain risk. That is not charity. That is the industry quietly warning Washington that today’s target is tomorrow’s supplier crisis.

Also, political leaders from both parties criticise the Pentagon’s move. Senator Kirsten Gillibrand calls the designation “shortsighted, self-destructive, and a gift to our adversaries,” warning that attacking an American company for refusing to compromise safety measures resembles tactics expected from authoritarian states, not the United States.

A group of former defence and national security officials writes to Congress, urging an investigation into a “dangerous precedent,” noting that the tool is intended to protect against infiltration by foreign adversaries, not to penalise domestic firms over safeguards tied to surveillance and autonomous weapons.

That is a rare alignment: hawkish national security voices and civil liberties voices both calling foul, even if their reasons differ.

Anthropic Plans A Court Fight

Anthropic signals it will challenge the designation.

Amodei says, “We do not believe this action is legally sound, and we see no choice but to challenge it in court.”

This sets up a legal battle with implications beyond one vendor. Courts may need to decide how broadly the Pentagon can apply supply chain risk authorities, and whether “least restrictive means” language constrains the department in practice.

If Anthropic wins, the designation tool weakens for domestic disputes. If the Pentagon wins, every AI vendor negotiating defence terms will treat procurement as political terrain, not just a matter of technical compliance.

Consumer Demand Surges While Washington Burns Bridges

The strangest twist: Anthropic reports a surge in consumer interest during the fallout.

The company says more than a million people signed up for Claude each day during the week of the dispute, placing it above other AI apps in many App Store rankings.

This creates an unusual split-screen moment. Government procurement treats Anthropic as a risk. Consumers treat Anthropic as a principled brand.

That tension won’t resolve quickly. It will shape hiring, partnerships, and enterprise adoption decisions.

TF Summary: What’s Next

The Pentagon’s supply chain risk designation against Anthropic escalates a contract dispute into a national security confrontation. Anthropic argues the practical scope is narrow and says contractors can still use Claude outside direct defence contract work, a view Microsoft supports. Yet the precedent is the bigger problem: Washington used a tool designed for foreign adversaries against a domestic AI vendor after the vendor refused to loosen guardrails around surveillance and autonomous weapons.

MY FORECAST: Anthropic will sue fast and fight publicly. Enterprises will demand clearer contract language, clearer audit trails, and stronger vendor portability plans. Defence contractors will keep multiple model providers “hot-swappable” inside their stacks. The Pentagon will keep insisting on “all lawful uses,” and AI vendors will keep drafting red lines with sharper legal teeth. This episode won’t stay isolated. It will become the template for the next confrontation between AI governance and military procurement.
