Age verification on social media platforms is expected to be standard practice soon.

Discord built its empire on a simple promise: hang out, talk freely, and don’t feel like the Internet is peering over your shoulder. That promise ran headfirst into government pressure, child-safety activism, and a user base that treats privacy as a non-negotiable religion. The platform’s planned global age-verification rollout, pitched as a way to protect minors, met with fierce backlash and has now been delayed.

At issue is a familiar digital conundrum. Lawmakers want stricter safeguards for children. Users demand less surveillance. Platforms get squeezed in the middle like a stress ball with venture funding. Discord, long marketed as a refuge for gamers and niche communities, suddenly finds itself cast as a gatekeeper expected to verify millions of identities without alienating the very people who made it popular.

In short, the digital community platform tried to install a bouncer at the door of the Internet’s most chaotic house party. Guests said, “No thanks!”

What’s Happening & Why This Matters

Safety Rules Meet Privacy Panic

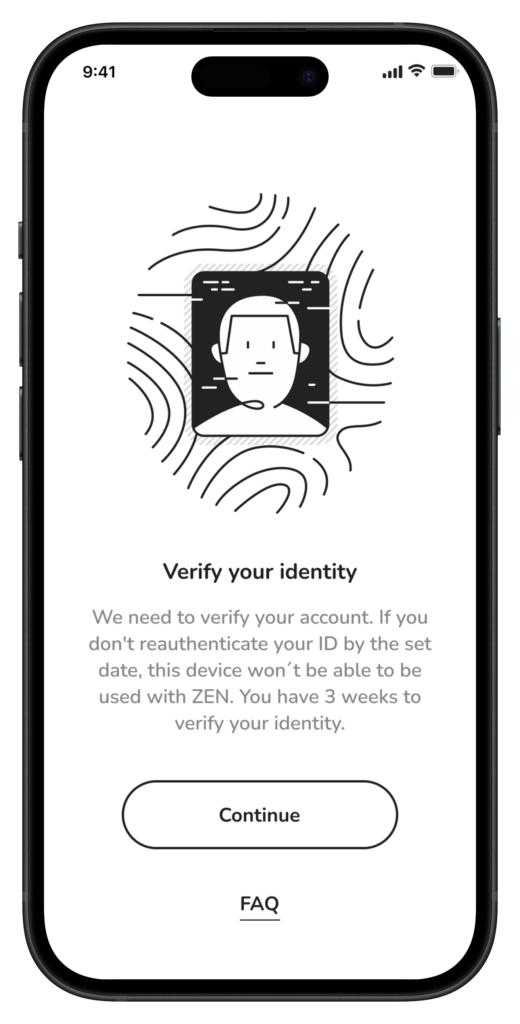

Discord planned a global age-verification system designed to ensure minors cannot access adult content or unsafe spaces. Governments across Europe, the U.K., and elsewhere are compelling platforms to verify users’ ages using official documentation, facial scans, or third-party verification tools. Regulators frame the requirement as common sense. Critics call it a digital ID system in disguise.

Users did not mince words. Many argued that handing over passports, driver’s licenses, or biometric data to a chat app feels excessive, intrusive, and risky. Others warned that centralised databases of identity data attract hackers like sugar attracts ants.

Discord acknowledged the concerns and paused the rollout. In a statement, the company said it wants to “get this right” before expanding the system worldwide. Translation from corporate dialect: the pitchforks came out.

Privacy advocates also sounded alarms. Organisations focused on digital rights warned that mandatory verification erodes anonymity, which is vital for activists, journalists, LGBTQ+ youth, and anyone living under restrictive regimes. When anonymity disappears, vulnerable people often disappear from public conversation, too.

Governments Turn Up the Heat

Pressure does not come from nowhere. Regulators increasingly hold platforms responsible for harm experienced by minors online. Several jurisdictions already impose or plan strict rules forcing companies to block underage users from adult content or risky interactions.

Officials argue that voluntary moderation has failed. Platforms, they say, allowed toxic communities, exploitation, and harmful material to spread too easily. Age verification offers a blunt but measurable tool. Either a company checks IDs, or it faces fines and legal action.

Supporters of stricter rules point to troubling data on teen mental health, online grooming, and exposure to harmful content. Critics counter that verification treats symptoms, not causes. A scanned ID does not fix harassment, misinformation, or addictive design.

The clash boils down to philosophy. Should the Internet operate like a public square with minimal barriers, or like a nightclub where entry requires proof of identity?

Discord’s Identity Crisis

Discord sits in an awkward position compared with traditional social networks. The platform thrives on private servers rather than public broadcasting. Communities often operate like digital living rooms. That intimacy makes heavy identity checks feel especially invasive.

For years, Discord leaned into its reputation as a space where pseudonyms rule and real names matter less. Gamers, creators, students, and hobbyists flocked to that model. Suddenly asking millions of users to verify their identities feels like a reversal of the brand’s core DNA.

Some users fear a slippery slope. If age checks arrive today, broader identity verification might follow tomorrow. The nightmare scenario involves tying every online action to a real-world identity — a dream for regulators and advertisers, a horror show for privacy purists.

Discord insists that the goal is to protect minors. Yet trust, once shaken, does not bounce back like a rubber ball. It behaves more like a dropped ceramic mug.

Technical Limits Add Fuel

Even flawless intentions collide with messy reality. Age-verification tech is imperfect. Facial age estimation is less accurate for some demographic groups than for others. Document checks can fail or wrongly reject legitimate users. These systems also create accessibility barriers for people without government-issued IDs.

False positives present another headache. Imagine a 30-year-old locked out of a community because an algorithm decides they look under 18. Multiply that frustration by millions, and customer support teams start dreaming of early retirement.

Security risks loom large as well. Storing identity data creates a tempting target for cybercriminals. History offers plenty of cautionary tales. Major companies with vastly larger budgets struggle to prevent breaches. Users understandably hesitate to trust a chat platform with sensitive documents.

Stakes Behind the Drama

Advertisers and investors increasingly demand “brand-safe” environments. Platforms that host minors without robust safeguards risk reputational damage and regulatory fines. That pressure nudges companies toward stricter controls even when users resist.

At the same time, engagement drives revenue. Friction at signup or login reduces growth. Age verification adds friction with a capital F. Discord must balance compliance with user retention — a tightrope walk over a pit filled with lawyers and angry Reddit threads.

Competitors watch closely. If Discord succeeds without losing its community, others will follow. If the rollout sparks mass departures, platforms may rethink similar plans.

Cultural Fault Lines Online

The dispute reveals a deeper shift in how societies view the Internet. Early digital culture prized freedom and anonymity. Modern governance increasingly treats online spaces as extensions of real-world public life requiring regulation.

Young users grow up in a world where surveillance feels routine. Older users remember an Internet that felt like the Wild West. Those generational differences shape reactions to identity checks.

Meanwhile, authoritarian governments observe the debate with interest. Tools built for child protection can also enable censorship and tracking if misused. Technology rarely comes with built-in moral guardrails.

TF Summary: What’s Next

Discord’s delay signals caution, not surrender. Age verification will return in some form as regulatory pressure continues to rise. The company must redesign its approach to minimise data collection, maximise transparency, and rebuild trust. Expect pilot programs, optional methods, or region-specific rollouts before any global launch.

MY FORECAST: The trajectory points toward a more controlled Internet. Platforms, governments, and users will keep wrestling over where safety ends and surveillance begins. Discord’s predicament offers a preview of battles coming to nearly every online service. The party will continue, but future guests may need to show ID at the door.
