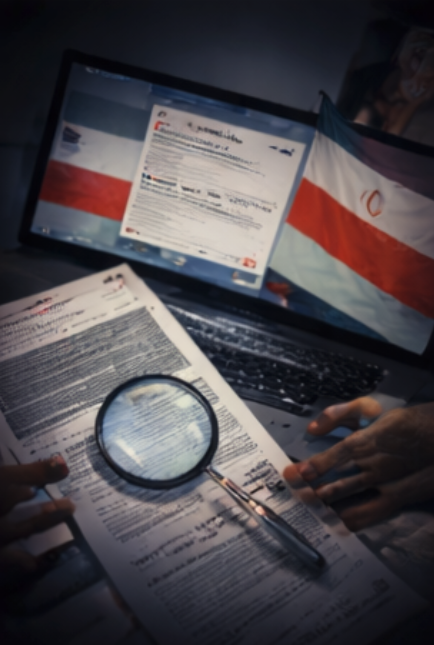

From fabricated campaign documents in Hungary’s election to Booking.com’s breach and AI tools recording doctor visits, digital trust took a beating.

Three stories. Three separate industries. One uncomfortable pattern. This week’s cybercrime and digital rights news features a Hungarian election marred by fabricated documents, a data breach at one of the world’s largest travel platforms, and a proposed class-action lawsuit targeting two major US healthcare providers over AI tools that allegedly recorded patients without their knowledge. Together, these incidents map an escalating crisis of digital trust, one that no single regulator, platform, or courtroom can fully contain.

Week 16 of 2026 is making one thing obvious: whether you are casting a vote, booking a hotel room, or seeing your doctor, your personal data, privacy, and right to informed consent are under active, coordinated pressure.

The bad actors are organised. The tools are sophisticated. And the legal frameworks are struggling to keep up.

What’s Happening & Why It Matters

Hungary’s Election: A Disinformation Battleground

Hungary’s parliamentary election on 12 April 2026 produced a landmark result: Péter Magyar’s Tisza party swept to a two-thirds majority, winning 138 of the parliament’s 199 seats and ending Viktor Orbán’s 16-year grip on power. But the campaign that preceded that result was drenched in manipulation, fabricated evidence, and coordinated digital suppression.

Disinformation researchers identified a new and brazen tactic: pro-government actors did not merely spin facts; they manufactured them. According to researchers at the European University Institute, Orbán’s Fidesz party created a forged party platform for Tisza. The document was leaked to the Hungarian news site Index, which published a story claiming the opposition planned a major tax hike if elected. The platform was entirely fabricated. It included absurd fake proposals, such as a tax on cats and dogs, designed to be credible enough to damage and ridiculous enough to humiliate. Tisza subsequently filed 13 press lawsuits against Index and other outlets for publishing the forgery.

“What I think is different is that now the government is going beyond propaganda and is also creating its own facts on the ground,” said Konrad Bleyer-Simon, a research fellow at the European University Institute. “They tried to fabricate proof for their propaganda.”

Domestic disinformation dominated. Szilárd Teczár, a journalist with the Hungarian fact-checking organisation Lakmusz, estimates that at least 90% of disinformation circulating before the vote originated in Hungary — not in Moscow. The wider Fidesz ecosystem, including media outlets under its influence and proxy organisations such as the National Resistance Movement and Megafon, an influencer network, produced the majority of the content.

Russian interference existed but was less potent than feared. The Kremlin-linked operation Matryoshka produced a fake video, styled as a report from the French outlet Le Monde, falsely claiming a Ukrainian artist had been poisoning Hungarian dogs. Another Russian actor, Storm-1516, published fake news articles claiming Magyar had insulted US President Trump, a story that gained traction on X. Alice Lee, an analyst at NewsGuard, described Russia’s approach as the “classic playbook” for election interference.

Critically, many of those Russian campaigns ran in English rather than Hungarian. They also ran primarily on X — a platform Teczár describes as “not that important” for Hungarian political discourse, where Facebook and YouTube dominate. The mismatch undercut their reach significantly.

New restrictions from Meta and Google banning political advertising in the EU forced Fidesz to change tactics. The party created private Facebook groups, including “Fighters Club” with over 61,000 members and “Digital Civic Circles” with over 100,000 members, to organise supporters, boost posts, and coordinate digital amplification outside the formal ad system. Together, the two groups ran over 4,000 Meta ads encouraging users to join them, according to Political Capital, a leading Hungarian NGO. Paid ads from these groups reached at least 100,000 people in a single week, amplifying fabricated stories about opposition politicians being drafted for the Ukraine war.

Booking.com Breach: Millions of Travellers at Risk

Booking.com confirmed on 13 April 2026 that unauthorised third parties accessed customer booking data, exposing names, email addresses, physical addresses, phone numbers, and any information guests had shared with their accommodations. No payment data was accessed. The number of affected customers has not been disclosed.

The platform, which handles hundreds of millions of bookings per year and lists over 30 million properties globally, began emailing affected customers over the weekend with a stark warning: “We recently noticed suspicious activity affecting many reservations, and we immediately took action to contain the issue.” As a precaution, Booking.com reset the PINs for all affected reservations.

Communications lead Sage Hunter confirmed the breach in a statement: “At Booking.com, we are dedicated to the security and data protection of our guests. We recently noticed suspicious activity involving unauthorised third parties accessing some of our guests’ booking information. Upon discovering the activity, we took action to contain the issue. We have updated the PIN for these reservations and informed our guests.”

The company declined to answer questions about the scale of the breach or how attackers gained entry. That opacity is already drawing criticism. As The Register noted, this is not Booking.com’s first rodeo. In 2021, Dutch regulators fined the company €475,000 (£408,000) after a breach exposed personal data, including credit card details, from more than 4,000 customers, following a supply chain compromise of hotel staff logins.

The more immediate danger is phishing. Stolen booking data, with real names, reservation dates, and message histories, creates a near-perfect template for convincing scam emails. Several Reddit users reported being targeted by scammers who appeared to have their private reservation details. Booking.com’s own platform messaging system has been exploited before, turning legitimate conversations between guests and hotels into channels for payment fraud. The company has reported the breach to the Dutch privacy regulator and, under GDPR, could now face a formal investigation and further fines. Given that Booking Holdings, the $137 billion (€126.1 billion) parent company, has not disclosed the breach’s full scope, regulators are unlikely to accept this level of ambiguity quietly.

Patients Sue Over AI Tools That Secretly Recorded Doctor Visits

A proposed class-action lawsuit filed on 10 April 2026 in federal court in San Francisco names two of California’s largest healthcare providers — Sutter Health and MemorialCare — over their use of an AI transcription tool called Abridge. The plaintiffs allege their private physician-patient conversations were recorded, transcribed, and transmitted to external servers — without their knowledge or meaningful consent.

Abridge, which bills itself as an “ambient clinical documentation” platform, operates by listening to patient-doctor conversations, automatically generating transcripts, and producing draft clinical notes for health records. The technology is now deployed in over 250 health systems across the United States. Sutter Health began its partnership with Abridge two years ago.

The complaint is detailed and damaging. The recordings allegedly captured medical histories, symptoms, diagnoses, medications, and treatment discussions — among the most sensitive categories of personal data. The plaintiffs claim they received no clear notice that conversations would be recorded by an AI platform, transmitted beyond the clinical setting, or processed by a third-party system. The lawsuit alleges violations of California’s privacy law, medical information confidentiality law, unfair business practices law, and a federal wiretapping statute.

Sutter Health spokesperson Liz Madison said: “We take patient privacy seriously and are committed to protecting the security of our patients’ information. Technology used in our clinical settings is carefully evaluated and implemented in accordance with applicable laws and regulations.” MemorialCare and Abridge did not respond to requests for comment.

The case lands amid rapid, arguably reckless AI deployment across the healthcare sector. Roughly six in ten American physicians show at least one symptom of burnout, with documentation and billing demands widely cited as the primary driver. AI transcription tools like Abridge promise to significantly reduce that burden, which is partly why they have been adopted so quickly. But speed of adoption does not override the requirement for patient consent. Under HIPAA, a third-party vendor that receives, stores, or transmits patients’ health information on a provider’s behalf must be covered by a formal Business Associate Agreement. Whether such agreements were in place, and whether patients were properly informed, are central questions the case now raises.

This is not a standalone case. California courts are also hearing a separate lawsuit against Otter AI, which faces allegations of “deceptively and surreptitiously” recording private conversations without consent via its integration with Zoom and Microsoft Teams. The pattern is consistent: AI tools deployed without adequate disclosure, in settings where the informational stakes are highest.

TF Summary: What’s Next

These three stories share a common architecture. A powerful actor, whether a political party, a global travel platform, or a healthcare provider, deploys technology without adequate transparency or accountability, and the people most affected are the last to know. In Hungary, fabricated evidence was pushed through legitimate media channels and private social platforms to undermine a democratic contest. The result, Magyar’s victory, suggests voters ultimately resisted. But the playbook is now documented, and it will be reused.

For Booking.com, the path forward runs directly through the Dutch Data Protection Authority and potentially the wider EU regulatory apparatus. A company with the platform’s scale and history cannot continue to treat transparency about breaches as optional. For Sutter Health, MemorialCare, and Abridge, a federal court in California will now decide whether deploying AI documentation in this way amounted to what the plaintiffs describe as a systematic failure to respect patient privacy. Whatever the outcome, the case is likely to set a significant precedent for AI deployment in clinical settings across the United States, and possibly beyond.
