Courts slow the crackdown while Google cranks the AI dial to eleven

Two stories collide in the same news cycle and reveal something weird about the tech world: governments want to restrain social media, while Big Tech races to build tools that generate entire realities on demand. One hand reaches for the brakes. The other stomps the accelerator.

A federal judge in Virginia halts a law that limits teens’ social media time. Meanwhile, Google unveils a new AI image engine in Gemini that turns text into polished visuals in near-real time. One debate centres on safety and rights. The other turns on capability and competition.

Together, they paint a portrait of a digital ecosystem that refuses to slow down — even as regulators try to impose guardrails.

What’s Happening & Why This Matters

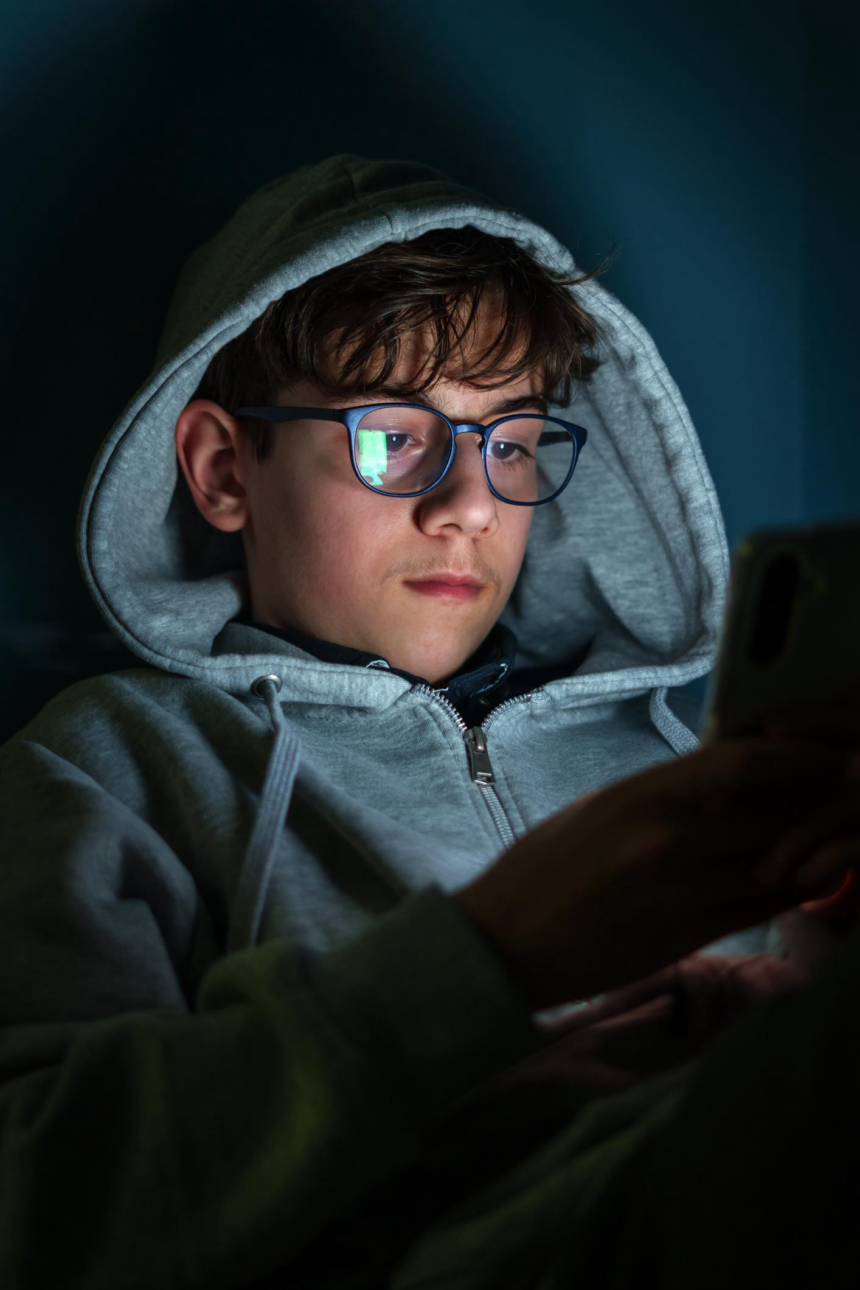

Court Blocks Virginia’s Teen Social Media Limits

Virginia lawmakers attempt a blunt solution to a subtle problem: limit how long minors can use social platforms. Senate Bill 854 caps usage at one hour per day for users under 16 and requires companies to verify age. A federal judge now hits pause on the law that caps teenage screen time.

The ruling stems from a lawsuit by NetChoice, a tech lobbying group representing companies including Meta, Google, X, Reddit, and Netflix. The organisation argues that forced age verification threatens privacy and free speech.

Judge Patricia Tolliver Giles acknowledges the intent behind the law but rules that the method crosses constitutional lines. The decision stresses that protecting youth does not permit the government to trample First Amendment rights.

“The Court recognises the commonwealth’s compelling interest in protecting its youth,” she writes, “however, it cannot infringe on First Amendment rights.”

The law would have required platforms to determine whether users fall under the age threshold using “commercially reasonable methods.” In practice, that means collecting sensitive identity data from minors — precisely what critics say creates new risks.

NetChoice co-director Paul Taske delivers the blunt version of that argument. Age-verification databases, he warns, become “a honeypot for cybercriminals and predators.”

Here lies the paradox. To protect children online, platforms must gather more personal data about children. Regulators try to reduce harm but risk manufacturing a different kind of harm in the process.

Recent court decisions across the U.S. suggest a pattern: sweeping restrictions struggle to survive constitutional scrutiny. Similar laws in Louisiana and Ohio already face injunctions, while a California law targeting addictive feeds survives legal challenge. The patchwork grows messier by the month.

In practical terms, the ruling buys tech companies time and signals that nationwide restrictions on social media usage face a steep legal climb.

Google Drops a Visual AI Power Upgrade

While lawmakers debate the number of minutes per day, Google has upgraded its capabilities to generate entire worlds in seconds.

The company released Nano Banana 2 — formally called Gemini 3.1 Flash Image — as the default image generator across its ecosystem. That includes the Gemini app, Search, AI Studio, Vertex AI, and Flow.

Nano Banana started as a curious experiment. It now appears to be a central pillar of Google’s AI strategy.

The new model promises higher fidelity, faster generation, and fewer visual glitches. AI-generated images often betray themselves through warped text or odd object placement. Google claims those issues shrink dramatically with the new release.

Nano Banana 2 draws on the Gemini 3.1 language model, providing stronger context when rendering scenes. It can handle up to five consistent characters in one image and manage workflows involving as many as fourteen objects without turning the result into visual soup.

The upgrade also boosts texture quality, lighting realism, and text accuracy — a long-standing Achilles’ heel for image models. Google even positions it as capable of producing clean infographics, educational visuals, and storytelling content without heavy editing.

Resolution support stretches from small square outputs to full 4K widescreen images. In other words, the system scales from meme creation to professional production.

Perhaps most telling: Google retired the previous versions. Nano Banana 2 replaces both the standard and Pro models across the board.

That is confidence. Companies rarely force migration unless the new tool outperforms the old across most use cases.

The Story: Control vs. Capability

The developments look unrelated at first glance. One concerns teenagers and screen time. The other concerns image generation. Yet both revolve around a central tension: who controls the digital environment — governments or platforms?

Virginia’s law represents a regulatory instinct: limit exposure to reduce harm. Google’s release represents the industry instinct: build more powerful tools and let users decide how to use them.

Neither approach solves the whole problem.

Restrictive laws can backfire. Overpowered tools can amplify misinformation, deepfakes, and manipulation. Both sides chase outcomes without fully understanding second-order effects.

There is also a generational dimension. Teenagers use social platforms not merely for entertainment but also for identity, socialisation, and community. Limiting access risks isolating them from digital public spaces. At the same time, unregulated environments expose them to addictive design patterns and harmful content.

Meanwhile, AI image systems blur the boundary between reality and fabrication. What happens when the same teens grow up in a world where images can no longer serve as reliable evidence?

The future looks less like a simple “screen time problem” and more like a cognitive environment problem.

Competitive Stakes in the AI Race

Google’s release also highlights the fierce competition among AI labs. OpenAI, Anthropic, Meta, and others sprint to dominate multimodal systems — tools that handle text, images, audio, and video as a unified medium.

Image generation sits at the centre of that race because visuals drive engagement. Social platforms thrive on them. Advertising depends on them. Political messaging weaponises them.

A faster, more accurate generator does not merely improve creative workflows. It transforms how information spreads.

If one company’s tool becomes the default creative engine across phones, browsers, and enterprise software, that company gains enormous influence over digital expression itself.

Google appears determined to ensure that the engine runs on Gemini.

TF Summary: What’s Next

The Virginia ruling suggests that courts will continue to act as referees in the battle between youth protection laws and constitutional rights. Expect more state-level attempts, more lawsuits, and a growing pile of conflicting decisions. A nationwide standard seems distant.

At the same time, AI capabilities accelerate with almost comic indifference to regulatory timelines. Tools like Nano Banana 2 expand what individuals can create instantly, raising questions about authenticity, trust, and digital literacy.

MY FORECAST: Governments attempt to control usage. Tech firms expand possibilities. Society sits in the middle, scrolling nervously. If history offers any clue, innovation will outrun regulation — then force a reset when consequences accumulate. Until then, the internet becomes simultaneously safer on paper and stranger in practice.
