AI products get more personal, agentic, and far more willing to touch your files, health data, and daily routine.

Consumer AI spent the last year learning how to answer questions faster. Now it is taking on a bigger job. This week's product news from Google and Manus shows where the market is heading next: AI that knows your context, uses your apps, reads your records, and completes real tasks instead of stopping at a neat paragraph.

That change matters because it moves AI closer to the centre of daily life. Google is expanding Gemini's Personal Intelligence across Search, the Gemini app, and Chrome in the U.S. Fitbit is also turning its AI health coach into a deeper wellness layer with better sleep analysis and optional medical-record links. At the same time, Manus launched a desktop feature that lets its agent work directly with local files and apps through the command line. The moves came from different companies, yet they point in the same direction: the next wave of AI is less about chat and more about action.

In plain English, AI is moving from “tell me something” to “help me do something.” That sounds useful. It also raises bigger questions about privacy, trust, permissions, and how much context people want to hand over. AI assistants used to live outside the house and knock at the door. Now they want the keys, the calendar, the photo library, and the inbox. In Fitbit’s case, the assistant even wants your lab results. That is where the fun, and the risk, begins.

What’s Happening & Why This Matters

Gemini Moves Closer to a True Personal Assistant

Google said on 17 March that Personal Intelligence is expanding in the U.S. across AI Mode in Search, the Gemini app, and Gemini in Chrome. The pitch is simple: connect your Google apps, and Gemini gives answers that fit your own history, habits, and stored information. Google says that can mean shopping suggestions based on past purchases, travel plans built from old confirmations and photos, or more useful help without forcing users to re-explain their life every time they type a prompt.

Google framed the feature in a very direct way. In its post, the company said, “Personal Intelligence allows you to securely connect the dots across your Google apps” to deliver responses that are uniquely relevant. That wording matters because it tells users what the feature really is: a context engine. It is not just smarter writing. It is AI with memory hooks into the Google stack.

Google said Personal Intelligence is available in the U.S. for free-tier users in AI Mode in Search, and rollout in the Gemini app and Chrome is also starting for those users. Earlier this year, Google positioned the feature as a beta for Google AI Pro and Ultra subscribers. This week’s change turns it from a premium preview into a wider consumer feature. That alone tells you Google sees this as core product behaviour, not a side experiment.

At the same time, Google kept stressing user control. The company said people can choose which apps to connect and can turn those connections on or off at any time. On the support side, Google lists the current app pool as Google Photos, YouTube, Google Workspace services such as Gmail, Calendar, Drive, Docs, Sheets, Slides, Keep, Tasks, Chat, and Meet, plus Google Search services such as Maps, Shopping, News, Flights, and Hotels. That is a huge surface area. It gives Gemini far more context, and it opens a bigger privacy conversation.

That conversation gets sharper once you read Google’s help pages. The company says data shared between Gemini and connected apps can include emails, files, events, photos, videos, and location information, and that the data may relate to sensitive topics such as race, religion, health, or other confidential information. Google also says that connected-app data is used to personalise the Gemini experience, provide tailored recommendations, and improve Google services, including training generative AI models. That is a useful disclosure, and a reminder that “personal” AI works because it sees more of your life.

Google tried to soften that concern in its earlier Personal Intelligence post. There, it said Gemini “doesn’t train directly on your Gmail inbox or Google Photos library.” It said the system uses those sources to answer you, while model improvement relies on more limited data, such as prompts and responses after filtering or obfuscation steps. That is an important distinction. Yet for many users, the practical question will stay the same: do I trust the assistant enough to hand it the map of my life?

Manus Turns AI From Assistant Into Operator

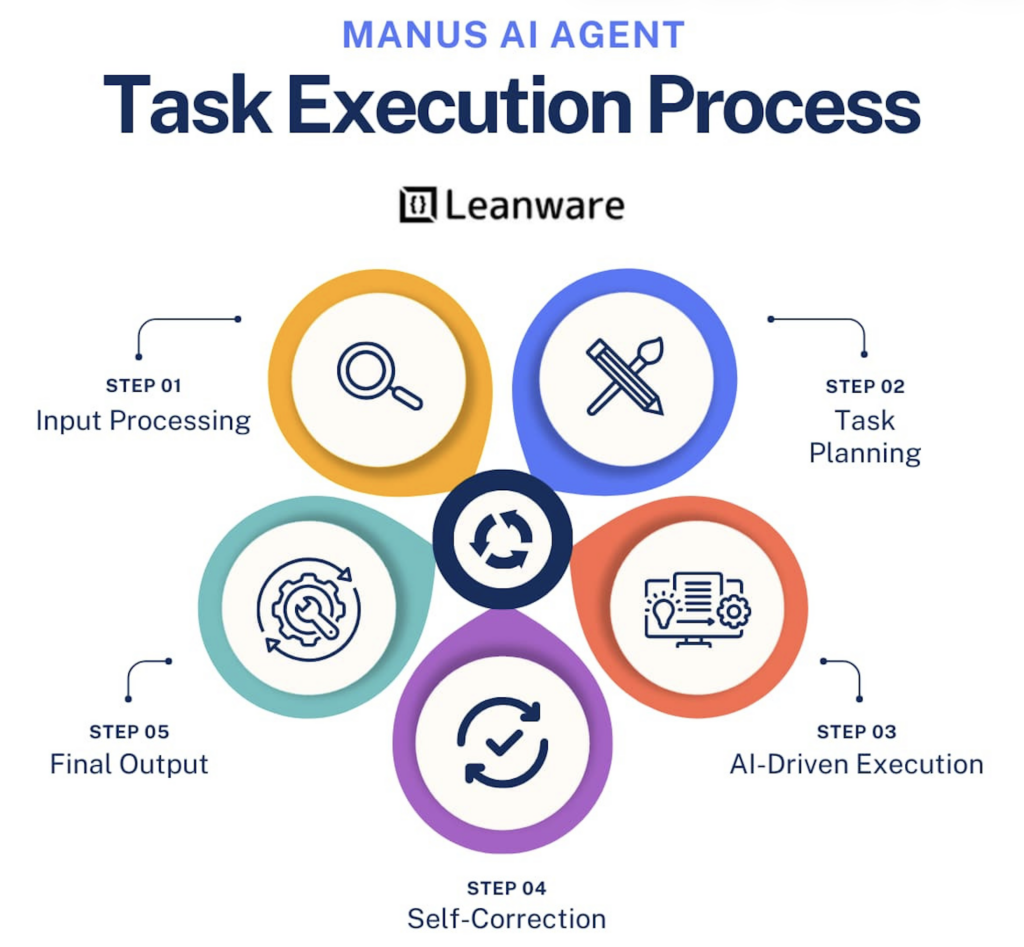

Manus made a different move, but the same theme runs through it. In a new desktop feature called My Computer, the company said its agent can leave the cloud to work with local files, tools, and applications. Manus says the desktop app executes command-line instructions in a user’s terminal, which lets it read, analyse, and edit local files and launch local apps. That is a bigger step than “summarise the PDF.” It moves the product into the territory of direct system operation.

The examples in Manus’s own launch post show the kind of work it wants to own. A florist asks it to organise a large photo folder. An accountant asks it to rename hundreds of invoices. In another example, a colleague used Manus to build a real-time meeting translation and subtitle app in Swift on a Mac, and Manus said the process took about 20 minutes with no manual code writing. The product pitch is not subtle. This is an AI agent that wants to do desktop labour.

The company went even further. Manus says My Computer can use idle local compute, including a GPU or an always-on Mac mini, to train machine-learning models or run inference. It describes a setup where a user can send an instruction from a phone while out of the house and let Manus complete the work on their home machine. That is an interesting blend of cloud intelligence and local execution. It also indicates where agent products want to go next: distributed, persistent, and tied to the user’s own hardware.

There is another layer here. The Manus homepage says, “Manus is now part of Meta — bringing AI to businesses worldwide.” If that branding stays in place, the desktop integration gives Meta-linked AI more reach into real workflow automation, not just content generation. That places Manus in a more crowded fight with coding agents, workflow bots, and productivity assistants, all of which want to become the operating layer between humans and software.

The upside is clear: repetitive digital chores waste time. File sorting, batch renaming, local script work, small app creation, and setup tasks are dull and expensive in human hours. The downside is just as clear. Once an agent can touch your files and apps, permissions are serious business. A bad answer in chat wastes a minute. A bad action on your machine can waste a day. Manus is pitching power. Power always comes with sharper edges.

Fitbit Turns AI Health From Tracking Into Interpretation

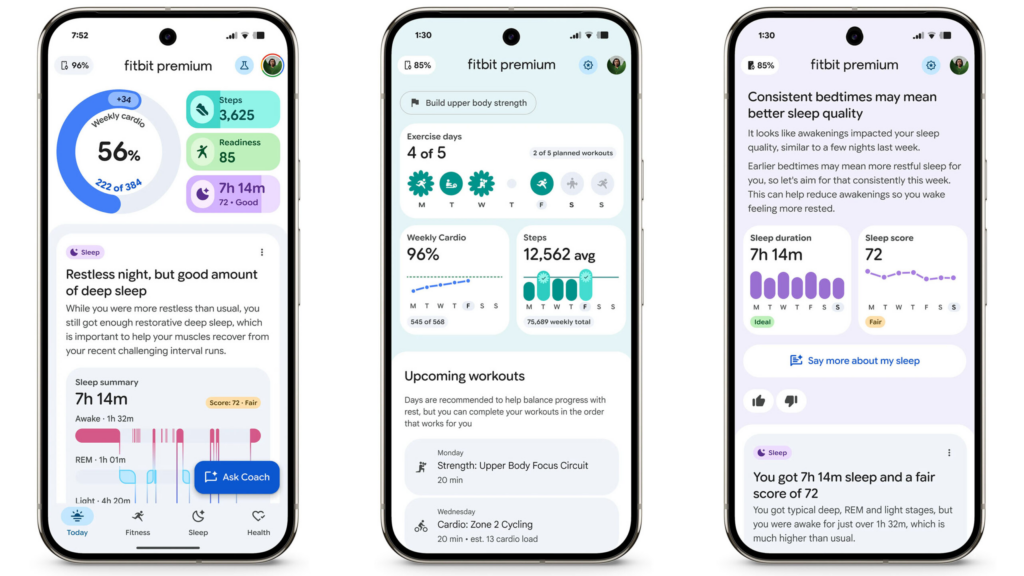

Fitbit added the most sensitive twist of the three stories. Google said Fitbit’s personal health coach is getting updates across sleep science, metabolic research, and medical record linking. The goal is to move the coach past basic wellness metrics and into more tailored guidance. Florence Thng, Google’s Health Intelligence Product Management Director, wrote that the company is taking “the next step” by improving sleep understanding and integrating clinical history for a fuller view of well-being.

The sleep update is concrete. Google says Fitbit is rolling out a further 15% improvement in sleep staging accuracy for Public Preview users. It says the models do a better job of distinguishing between trying to sleep and actually sleeping, while capturing interruptions, naps, and stage transitions in a way that more closely matches clinical gold-standard measurements. In product terms, Fitbit wants the sleep score to work less like a rough grade and more like actionable coaching.

Then comes the bigger move. Google says that starting next month, Public Preview users in the U.S. will be able to link their medical records, including lab results, medications, and visit history, to the Fitbit app. The service will work with partners including b.well and CLEAR, and identity verification will use IAL2-certified standards with a selfie and a valid ID. That is a lot more than a step counter with nice charts. It is AI health software stepping onto clinical turf, even if the company still labels it as a wellness product.

Google also explained why it wants those records. It said that when the coach understands your medical history, its guidance is safer, more relevant and more personalised. The company gave a sample prompt on improving cholesterol, in which the coach could summarise lab trends and provide wellness information based on medical history and wearable data. That sounds useful. It also sits squarely in the part of AI where users will ask a hard question: when does “coach” start to feel like “quasi-clinician”?

Google tried to draw a firm line there. It said medical records are securely stored with Fitbit, are not used for ads, and are under the user’s control for use, sharing, or deletion. It added a disclaimer: Fitbit is not intended to use medical records to diagnose, treat, cure, prevent, or monitor any disease or condition, and users should consult a healthcare professional before making health changes. That legal language matters because the product is creeping toward medical advice, even when the company says it is not medical care.

TF Summary: What’s Next

This week’s AI product news tells a clean story. Google wants Gemini to know you better. Manus wants its agent to do more work for you. Fitbit wants AI to interpret your body with more context and more confidence. In each case, the product gets stronger by gaining more access: to your apps, your files, your history, your device, and your health data. That makes the tools more useful. It also makes trust the real product.

MY FORECAST: AI leaders will spend the rest of 2026 racing toward two end goals at once. First, they will build agents that can act across more surfaces with less friction. Second, they will wrap those agents in tighter privacy controls, permission layers, and audit trails because users will demand proof, not vibes. The winners will not just build the smartest model. They will build the assistant that people feel safe letting into their real lives.

— Text-to-Speech (TTS) provided by gspeech | TechFyle