Meta finally admits that “just give the kid a phone” is not a safety strategy.

Parents have been stuck with a ridiculous choice for years. Either keep kids off messaging apps and look like a digital tyrant, or let them in and hope the internet behaves itself for once. Spoiler: it does not.

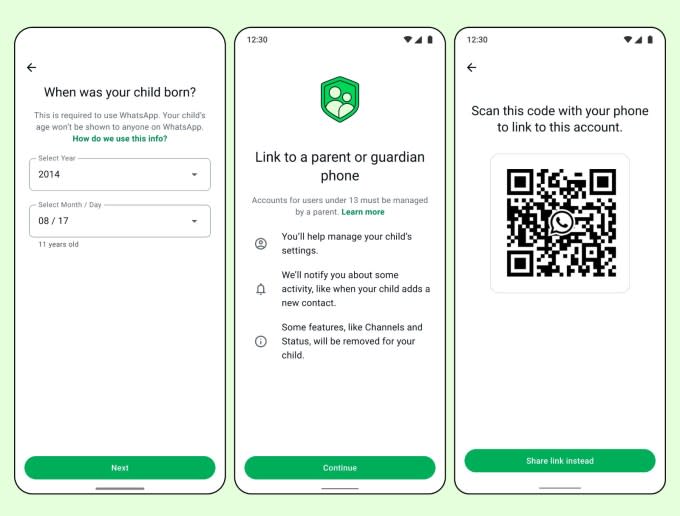

WhatsApp is rolling out parent-managed accounts for children under 13 (U13). The new setup limits the app to calling and messaging, blocks a long list of advanced features, and gives parents direct control over privacy settings, contact approvals, and certain account changes.

This is not a tiny product tweak. It is Meta acknowledging that the old social-media logic (launch first, patch harm later) does not look so charming when pre-teens are involved. The real question is whether the controls are strong enough to matter or merely polished enough to calm adults.

What’s Happening & Why This Matters

WhatsApp’s Child-Specific Version for U13 Users

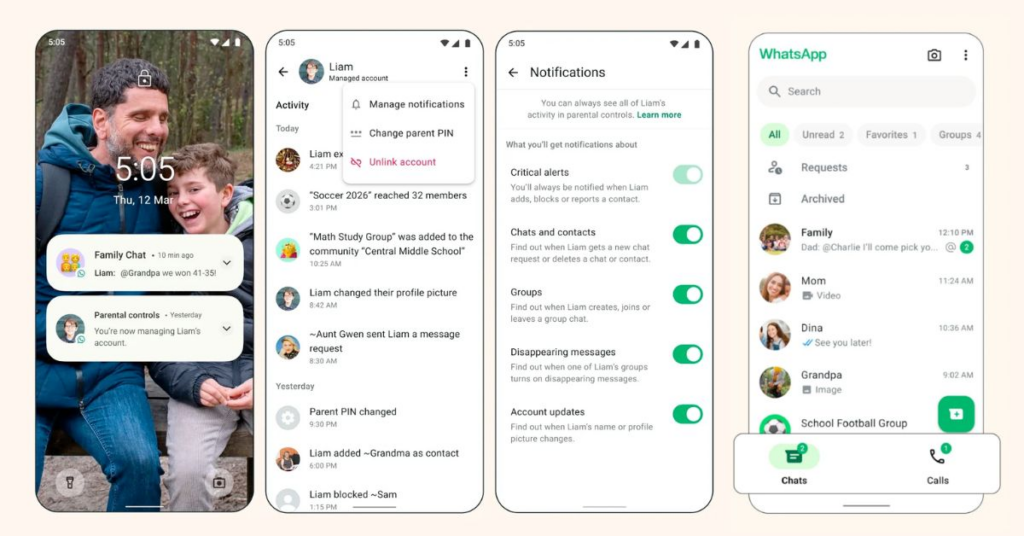

WhatsApp’s new parent-managed accounts are designed for U13 kids and are meant to offer a narrowed version of the app. The accounts support only calling and messaging, while privacy settings can be changed only by a parent or guardian.

That matters because WhatsApp is not trying to pretend children will stay off messaging platforms forever. It is trying to build a fenced version of the experience instead. That is a more realistic move than the usual corporate fantasy where kids wait patiently until the approved birthday arrives, then stroll online like tidy little adults.

The child-focused setup blocks a long list of features that would create more risk or more confusion. Pre-teens on the accounts cannot use Meta AI, status updates, chat lock, app lock, location sharing, view-once messages, disappearing messages, or linked devices.

That list is worth pausing on. It removes tools that can hide conversations, blur oversight, spread content more socially, or widen access across multiple devices. In other words, WhatsApp is not merely trimming the interface. It is cutting away escape hatches.
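To make the "narrowed app" idea concrete, here is a minimal sketch of how such a restriction could be modelled. It is not WhatsApp's implementation: the `Feature` enum, `ALLOWED_ON_MANAGED`, and `is_available` are hypothetical names, and the only facts taken from the rollout are which features stay and which go.

```python
from enum import Enum


class Feature(Enum):
    # Features named in the rollout; the enum itself is illustrative.
    MESSAGING = "messaging"
    CALLING = "calling"
    META_AI = "meta_ai"
    STATUS_UPDATES = "status_updates"
    CHAT_LOCK = "chat_lock"
    APP_LOCK = "app_lock"
    LOCATION_SHARING = "location_sharing"
    VIEW_ONCE = "view_once"
    DISAPPEARING_MESSAGES = "disappearing_messages"
    LINKED_DEVICES = "linked_devices"


# Assumption: an allow-list, so anything not listed is blocked by default.
ALLOWED_ON_MANAGED = frozenset({Feature.MESSAGING, Feature.CALLING})


def is_available(feature: Feature, parent_managed: bool) -> bool:
    """Return True if the feature can be used on this kind of account."""
    if not parent_managed:
        return True
    return feature in ALLOWED_ON_MANAGED
```

An allow-list is one plausible design choice here: a feature added to the app later would stay off for managed accounts until someone deliberately enables it.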

The Safety Model: Friction, Not Freedom

The most important part of the rollout is not the feature ban list. It is the way contact and messaging approvals work.

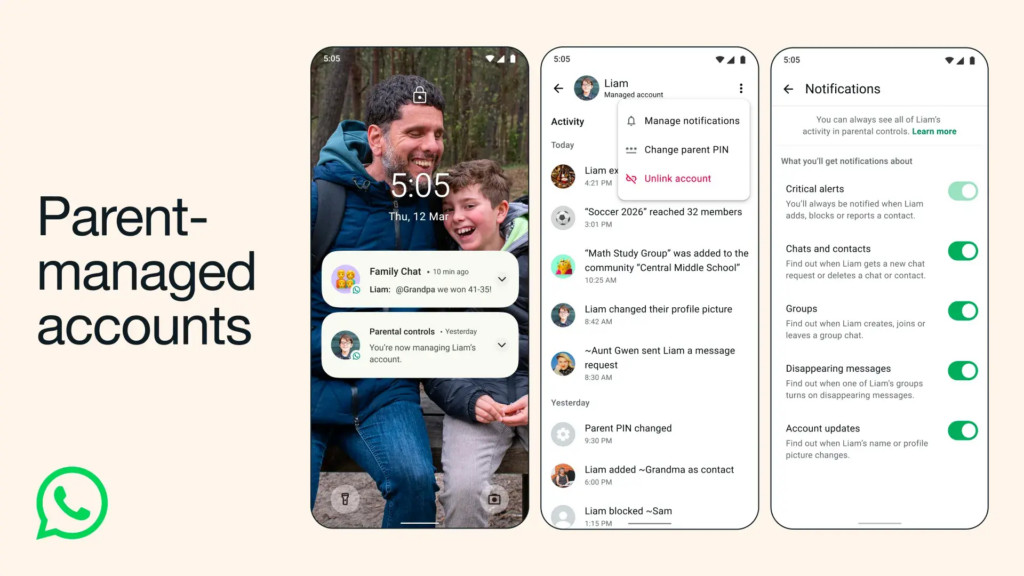

Only saved contacts can send messages to a parent-managed account, see the child’s profile photo, read account info, or view last-online status. If a child receives a message from an unknown contact or gets invited to an unknown group, the item goes into a Requests folder for parental approval. Parents are then notified on their own phones. They are also notified when a child adds a new contact or leaves a pre-approved group chat.

That is not subtle. WhatsApp is deliberately building friction into interactions that could expose kids to strangers or unvetted groups. And frankly, friction is underrated.

The tech industry spent a decade worshipping frictionless growth. Frictionless signup. Frictionless sharing. Frictionless engagement. That philosophy works beautifully if your only metric is user growth. It works much less beautifully when the users are children, and the frictionless path leads straight to bullying, grooming, spam, or worse.

WhatsApp’s new design says something simple: for younger users, less freedom in the app may actually mean more freedom for the family outside it.
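The approval rules in this section can be sketched as a small routing model. This is an illustration under stated assumptions, not how WhatsApp actually builds it: `ManagedAccount` and every method and field name are hypothetical, and "unknown group" is assumed to mean a group the parent has not already approved.

```python
from dataclasses import dataclass, field


@dataclass
class ManagedAccount:
    """Hypothetical model of a parent-managed account."""
    saved_contacts: set = field(default_factory=set)
    approved_groups: set = field(default_factory=set)
    requests: list = field(default_factory=list)       # items awaiting parental approval
    parent_alerts: list = field(default_factory=list)  # notifications sent to the parent

    def _hold(self, item: str) -> str:
        # Park the item in the Requests folder and tell the parent.
        self.requests.append(item)
        self.parent_alerts.append(f"approval needed: {item}")
        return "held"

    def receive_message(self, sender: str) -> str:
        if sender in self.saved_contacts:
            return "delivered"
        return self._hold(f"message from {sender}")

    def receive_group_invite(self, group: str) -> str:
        # Assumption: a group counts as known only if the parent approved it.
        if group in self.approved_groups:
            return "joined"
        return self._hold(f"invite to {group}")

    def add_contact(self, contact: str) -> None:
        self.saved_contacts.add(contact)
        self.parent_alerts.append(f"new contact added: {contact}")

    def leave_group(self, group: str) -> None:
        if group in self.approved_groups:
            self.approved_groups.remove(group)
            self.parent_alerts.append(f"left approved group: {group}")


account = ManagedAccount(saved_contacts={"grandma"}, approved_groups={"family"})
print(account.receive_message("grandma"))
print(account.receive_message("unknown number"))
print(account.requests)
```

The point of the sketch is the default: anything from outside the saved list is held rather than delivered, and the parent hears about it either way.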

Parents Get the PIN. Kids Get the Request Button.

WhatsApp is also using a six-digit parent PIN as the control point for key privacy and settings changes. During setup, parents create the PIN and then enter it again on the child’s phone to complete the managed-account pairing. Kids can still open their profile, tap Learn more, and visit the Parental controls section to request changes, but those changes still require the parent PIN.

That setup is clever because it gives the child some visibility into the system without handing them the keys to the castle. The product is saying: you can ask, but you cannot silently override.

This matters because many parental-control systems fail for one of two reasons. Either they are too weak and easy to bypass, or they are so rigid that families stop using them altogether. WhatsApp appears to be trying to strike a middle ground where kids can understand the rules and parents can still enforce them.

Will that work in every house? Of course not. No software feature can repair bad family communication, lazy supervision, or a pre-teen with elite-level determination and a second handset hidden in a backpack. But a PIN-controlled request system is still more sensible than pretending younger users can self-regulate their way through the internet with zero adult structure.
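The "you can ask, but you cannot silently override" flow can be sketched as a PIN-gated request queue. This is a minimal sketch, not WhatsApp's implementation: `ParentPinGate`, its methods, and the example setting name are hypothetical, and storing a salted PBKDF2 digest of the PIN is an assumption about good practice rather than anything the rollout describes.

```python
import hashlib
import hmac
import os


class ParentPinGate:
    """Hypothetical gate: the child queues changes, the parent PIN applies them."""

    def __init__(self, pin: str):
        # The rollout describes a six-digit PIN; reject anything else.
        if not (len(pin) == 6 and pin.isascii() and pin.isdigit()):
            raise ValueError("PIN must be exactly six digits")
        self._salt = os.urandom(16)
        self._digest = self._hash(pin)  # assumption: never store the raw PIN
        self.pending = []               # changes the child has requested
        self.settings = {}              # changes the parent has approved

    def _hash(self, pin: str) -> bytes:
        return hashlib.pbkdf2_hmac("sha256", pin.encode(), self._salt, 100_000)

    def request_change(self, setting: str, value: str) -> None:
        # The child can ask without knowing the PIN.
        self.pending.append((setting, value))

    def approve(self, pin: str) -> bool:
        # Constant-time comparison; nothing is applied on a wrong PIN.
        if not hmac.compare_digest(self._hash(pin), self._digest):
            return False
        for setting, value in self.pending:
            self.settings[setting] = value
        self.pending.clear()
        return True


gate = ParentPinGate("482915")
gate.request_change("profile_photo_visibility", "saved contacts")
print(gate.approve("000000"), gate.settings)
print(gate.approve("482915"), gate.settings)
```

A real system would also need attempt limits, since a six-digit PIN has only a million possible values, but that is outside what this sketch tries to show.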

A Global Push on Child Safety

The rollout does not exist in a vacuum. It arrives in a wider climate where governments and regulators are getting sharper about children’s online access, messaging risk, and age controls.

Countries such as Australia are widening age verification rules across chatbots, adult games, porn sites, app purchases, and search engines. At the same time, international debates continue around under-16 (U16) social media bans and stronger parental oversight.

That context matters because WhatsApp is not making the choice purely out of parental kindness. Platforms across the world are under growing pressure to show they can protect younger users before lawmakers decide to do the job for them in much harsher ways.

Meta has an extra reason to tread carefully here. Reports have documented how AI chatbots from major platforms have pointed vulnerable users toward illegal online casinos, while other users have stumbled upon harmful or exploitative content. When policymakers see stories like that, patience wears thin.

So WhatsApp’s parent-managed account is both a product launch and a preemptive argument. It lets Meta say: look, we are building age-specific controls, limiting risky features, and giving parents more authority. Whether regulators find that convincing is another matter. But the company clearly knows the mood has shifted.

The Feature Looks Useful, but It Will Still Face the Same Old Problems

Let’s not get carried away and crown this a total victory over the internet.

The system still depends on a few fragile things. First, parents have to actually set it up properly. Second, the child needs to keep using that managed phone and not migrate to another unmonitored device or app. Third, families need to stay engaged enough to review contact requests and group invitations instead of tapping through them like sleepy airport prompts.

There is a philosophical question hiding here. The controls reduce risk, but they also normalise a model where platforms mediate family oversight through app design. Some parents will welcome that. Others will find it intrusive, clunky, or insufficient.

And the biggest weakness is painfully familiar: kids do not live in one app. They constantly move between messaging, gaming, video, search, and social platforms. A strong WhatsApp setup may help inside WhatsApp. It does not magically secure the rest of the digital house.

Still, that does not make the feature pointless. It makes it partial. And partial protection is still better than handing a pre-teen the full adult app and wishing them luck.

TF Summary: What’s Next

WhatsApp’s new parent-managed accounts for U13 users limit the app to calling and messaging, block higher-risk features such as disappearing messages, linked devices, Meta AI, and location sharing, and give parents approval power over unknown contacts, group invites, and key settings changes. The system also relies on a six-digit PIN and notifications sent to parents’ phones to keep guardians in the loop.

MY FORECAST: This is the start of a redesign wave, not the end of it. Messaging apps will keep building child-specific modes because governments, schools, and parents are no longer willing to accept full-access adult products as the default for younger users. Expect stronger account segmentation, tighter parental approvals, and more pressure for linked safety features across Meta’s wider family of apps. The companies that adapt fastest will look “responsible.” The ones that do not will get regulated until they do.
