Digital curfews, screen time caps, and potential bans put Britain at the centre of the global youth tech debate.

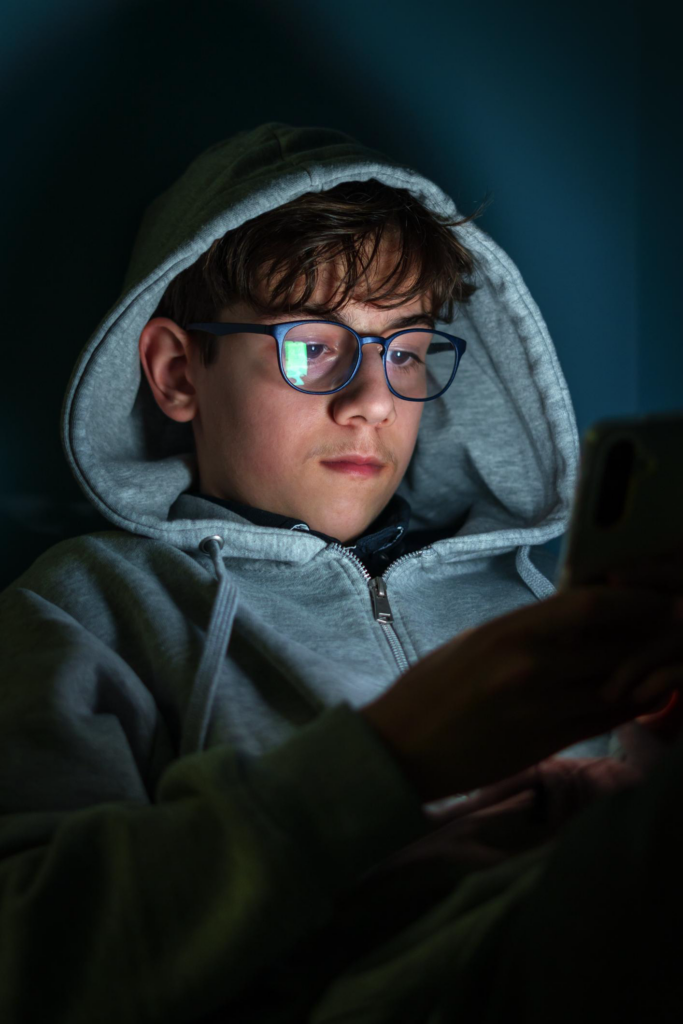

The United Kingdom is about to run a real-world experiment on teenagers and social media. Not a think tank report or a survey. An actual pilot.

Prime Minister Keir Starmer’s government has launched what it calls the “world’s most ambitious consultation on social media.” Hundreds of teenagers will test overnight digital curfews, daily screen limits, and even full social media bans.

The goal appears simple: reduce harm. The stakes are much bigger.

If Britain tightens restrictions on under-16 (U16) social media access, it could prompt changes to global platform policies. Australia has already passed a similar ban. The UK may follow. Tech companies are watching closely. So are parents.

What’s Happening & Why This Matters

A Three-Month Consultation With Real Consequences

The government has opened a three-month consultation that could lead to an outright ban on social media for U16s. Ministers say they are ready to toughen laws only six months after introducing new child protection measures under the Online Safety Act.

Officials state there is “growing agreement that more needs to be done.” That phrasing matters. It suggests momentum, not hesitation.

The consultation asks difficult questions. Should there be a minimum age for social media? If yes, what age? Should platforms disable addictive features such as infinite scrolling and autoplay? Should overnight curfews apply? How strong should age verification be?

It also stretches beyond Instagram and TikTok. Lawmakers want to examine AI chatbots and gaming platforms such as Roblox. The debate includes the wider digital ecosystem, not only social feeds.

This is a shift. The focus moves from content moderation to structural design.

The Teen Trial: Ban, Cap, or Curfew

The first pilot involves roughly 150 teenagers aged 13 to 15. Researchers will test three conditions:

- Total denial of social media access

- One-hour daily limits

- Overnight screen curfews

Researchers will monitor sleep patterns, mood, and physical activity. This transforms a political debate into a behavioural experiment.

Data could shape national policy. Evidence, not ideology, will drive the next move. At least in theory.

The pilot introduces an unusual dynamic. A government-backed study will measure teenage well-being directly. That raises questions about enforcement and privacy. However, it also signals seriousness.

Parents vs. Platforms

Public pressure fuels this policy push.

Smartphone Free Childhood mobilised roughly 250,000 supporters to contact their Members of Parliament and demand stricter rules. Campaign co-founder Joe Ryrie frames the issue bluntly: “Ordinary mums and dads are fed up with trying to out-parent algorithms built by trillion-dollar companies.”

That statement captures the emotional undercurrent. Parents feel outmatched by engagement-driven design.

On the other side, some child safety groups urge caution. The NSPCC warns that a blanket ban could drive teens into darker, unregulated corners of the internet. The 5Rights Foundation argues that a ban risks letting platforms off the hook for systemic design flaws.

Andy Burrows of the Molly Rose Foundation advocates evidence-based reform rather than simplistic solutions. He states that child safety must be the non-negotiable cost of doing business.

The debate splits along a fault line. Ban access. Or reform design.

Lobbying and Power Dynamics

Access to policymakers reveals another layer of complexity.

Reports show technology companies and their lobbyists attended at least 639 meetings with ministers over two years. Child safety campaigners attended 75.

That disparity, roughly 8.5 meetings for every one attended by campaigners, matters. Regulation rarely unfolds in a vacuum. Corporate influence shapes outcomes.

Meta declined to comment. TikTok and X did not respond publicly. Silence sometimes speaks louder than press releases.

The consultation places pressure on platforms. If lawmakers set age boundaries or mandate feature restrictions, product design may change at the code level.

Addictive Design Under Scrutiny

Infinite scroll. Autoplay. Algorithmic amplification.

These features maximise engagement. They also maximise time spent online. Critics argue they disrupt sleep and harm mental health.

The UK government is considering requiring platforms to disable certain features for minors. That approach targets the mechanism rather than the user.

Such measures would not only affect teenagers. They could redefine how platforms architect user journeys globally.

AI chatbots also enter the conversation. Should children interact freely with generative AI systems? Policymakers are unsure.

The question extends beyond screen time. It touches cognitive development and emotional resilience.

Enforcement Challenges

Age verification is a core hurdle.

Platforms currently rely on self-reported birthdates or partial verification. A stricter framework could require biometric checks or third-party verification services.

However, enforcement raises privacy concerns. Parents want protection. Teens want autonomy. Platforms want frictionless onboarding.

Balancing these demands will test regulators.

The International Ripple Effect

Australia has already implemented U16 social media restrictions. Britain may follow. Other European nations are watching closely.

If the UK enacts a ban or mandates structural changes, global companies might implement uniform standards rather than region-specific policies.

That ripple could affect billions of users.

Technology secretary Liz Kendall acknowledges the complexity. She says parents are grappling with screen time, the age at which children get devices, and online exposure. The consultation seeks input directly from children and families.

The policy aims to adapt to rapid technological change.

Cultural Impact

This debate transcends regulation. It questions digital childhood itself.

When does connectivity empower? When does it harm?

Social media delivers community, creativity, and access to information. It also exposes users to comparison, harassment, and harmful content.

Banning platforms might reduce exposure. Yet it may also isolate teens from peer networks.

The pilot may uncover nuanced outcomes. Perhaps partial limits improve sleep without eliminating connection. Perhaps curfews restore boundaries without total prohibition.

The experiment matters because it acknowledges complexity.

TF Summary: What’s Next

The UK is piloting U16 social media restrictions through real-world trials. Policymakers are weighing bans, time caps, curfews, and design reform. Parents demand stronger safeguards. Advocacy groups caution against simplistic answers. Platforms face mounting pressure.

MY FORECAST: Britain will introduce structured age boundaries combined with mandatory design changes rather than a total ban. AI chatbot restrictions and stronger age verification will follow. Other European nations will study the data and move quickly. The global social media landscape may shift from engagement-first to safety-first design.
