Meta is facing turbulence on multiple fronts. Instagram's moderation tools failed to contain an influx of explicit content, forcing the company into damage control. At the same time, the company is hunting down internal leakers as it tightens security around confidential projects. Meanwhile, Meta is developing a new AI-powered app that could rival existing AI assistants. Its path forward looks uncertain, with regulatory scrutiny increasing in Europe and trust issues brewing internally. Can Meta manage these setbacks while pushing forward with its artificial intelligence expansion?

Instagram Struggles with a Surge of Graphic Content

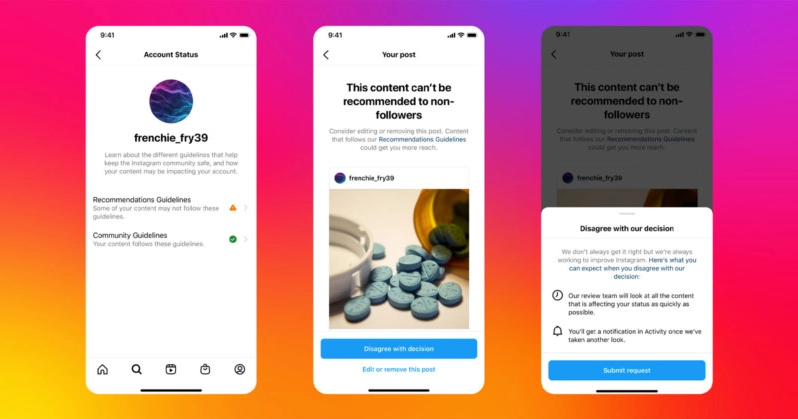

Meta is facing backlash after Instagram users reported an unexpected flood of violent and explicit content. The moderation systems that usually filter out harmful material failed, allowing disturbing images to spread across the platform. Users took to social media to voice their frustration, questioning how such content made it through Meta’s filters.

The company acknowledged the problem and quickly removed the posts, but the incident has renewed concerns about its ability to manage content moderation at scale. Critics argue that AI-driven moderation is still unreliable, often allowing harmful content to slip through while mistakenly flagging harmless posts.

Instagram has had previous content moderation issues, which have drawn scrutiny from regulators and user advocacy groups. This latest failure is likely to add pressure on Meta to improve its filtering technology and invest in better safety measures.

Meta Fires Employees for Leaking Internal Information

Meta has fired around 20 employees for sharing confidential company details, part of an effort to stop leaks. The firings came as CEO Mark Zuckerberg grew increasingly frustrated with internal information making its way to the public.

During a company-wide meeting, Zuckerberg reportedly expressed his disappointment, stating, "We try to be open, but when everything leaks, it makes it hard to trust the process." Shortly afterward, in an ironic twist, an internal memo warning employees of strict consequences for leaking information was itself leaked to the media.

Meta has struggled with internal leaks before, most notably when former employee Frances Haugen shared thousands of internal documents in 2021, triggering a round of congressional hearings. The recent firings suggest the company is taking a harsher stance on protecting internal discussions, though stopping leaks entirely may be impossible.

Meta Prepares to Launch a Standalone AI App

Meta is pushing deeper into AI with plans to launch Meta AI, a standalone chatbot app designed to compete with OpenAI’s ChatGPT and Google’s Gemini. The app will provide conversational AI services and build on the Meta AI assistant already available inside Facebook, Instagram, and WhatsApp.

CEO Mark Zuckerberg has committed between $60 billion and $65 billion this year to expanding Meta's AI capabilities, with the aim of making Meta a leader in generative AI. The standalone app is expected to be part of this push, giving users a dedicated space to interact with Meta's AI technology.

However, the company is already facing legal scrutiny in Europe, where regulators have raised concerns about how Meta collects user data to train its AI models. Privacy watchdogs have filed complaints, arguing that users are not given enough control over how their information is used.

Joel Kaplan, Meta’s global policy chief, recently stated that European regulations make it harder for companies to develop AI tools, warning that restrictive policies could slow innovation. As regulatory questions linger, Meta’s AI expansion faces more resistance in key markets.

TF Summary: What’s Next

Meta is dealing with multiple problems at once: user complaints about explicit content on Instagram, internal leaks that led to employee terminations, and regulatory challenges to its AI expansion. The upcoming Meta AI app could give the company a stronger foothold in the AI space, but privacy concerns and regulatory pressure may slow its progress. With competition in AI growing and its moderation policies under continued scrutiny, Meta's handling of these issues will be closely watched.