Meta’s newest teen safety tool promises early warnings—but critics fear panic, privacy risks, and algorithmic blame-shifting.

Instagram wants parents to know when their teens spiral online. The company plans to alert parents if under-16 (U16) users repeatedly search for suicide or self-harm content. The move lands amid lawsuits, regulatory pressure, and a growing public belief that social media harms young people. Meta frames the feature as protection. Critics call it reactive damage control with sharp edges.

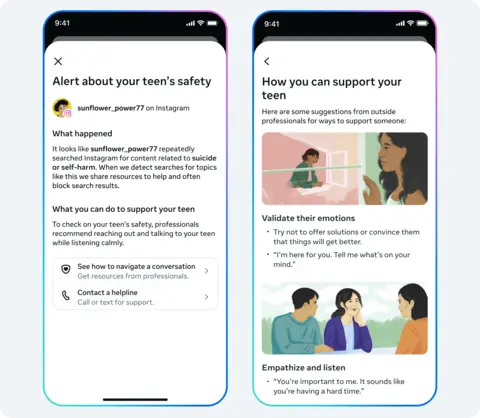

The update targets accounts enrolled in supervision tools. Parents receive notifications via email, text, WhatsApp, or in-app messages. Instagram also promises guidance resources so families can respond calmly rather than panic. The rollout begins in the United States, the United Kingdom, Australia, and Canada, with expansion planned later.

The idea sounds simple: catch warning signs early. The reality feels messier. Mental health experts warn that sudden alerts could frighten parents without preparing them for difficult conversations. Privacy advocates worry about surveillance inside families. Teen users may feel watched rather than supported. And critics argue the platform still delivers harmful content while blaming parents for monitoring failures.

What’s Happening & Why This Matters

Meta Pushes Proactive Teen Monitoring

Instagram already blocks or hides content that glorifies self-harm. The new feature moves upstream. Instead of reacting to posts, it monitors search patterns. If a teen repeatedly searches for terms such as “suicide” or “self-harm,” the system flags the behaviour and notifies parents.

Meta says it avoids alert fatigue. A single search will not trigger warnings. Repetition within a short period will. Parents then receive a detailed message explaining what happened and where to find help resources.
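Meta has not published how the repetition check works. As a rough illustration only, the "one search is ignored, several in a short period trigger an alert" rule can be sketched as a sliding-window counter. Everything in this sketch is hypothetical: the class name `RepeatedSearchDetector`, the term list, the threshold of three searches, and the 24-hour window are assumptions made for the example, not Instagram's actual values or code.

```python
from collections import deque
from datetime import datetime, timedelta

# Hypothetical values: Meta has not disclosed its real terms, threshold, or window.
FLAGGED_TERMS = {"suicide", "self-harm"}
THRESHOLD = 3
WINDOW = timedelta(hours=24)


class RepeatedSearchDetector:
    """Flags repeated sensitive searches inside a sliding time window."""

    def __init__(self, threshold=THRESHOLD, window=WINDOW):
        self.threshold = threshold
        self.window = window
        self._hits = deque()  # timestamps of recent flagged searches

    def record_search(self, query, when):
        """Return True when flagged searches in the window reach the threshold."""
        if not any(term in query.lower() for term in FLAGGED_TERMS):
            return False
        self._hits.append(when)
        # Drop flagged searches that have aged out of the window.
        while self._hits and when - self._hits[0] > self.window:
            self._hits.popleft()
        return len(self._hits) >= self.threshold
```

Under these assumed settings, a single flagged search returns `False`, as do searches spread several days apart. Only a cluster of flagged searches inside the window returns `True`, which is the point at which a parent notification would be sent. A real system would also need to handle spelling variants, coded language, and false positives, none of which this sketch attempts.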

The company also plans future alerts when teens discuss self-harm with Meta AI chat systems. That signals a new frontier: monitoring conversations with machines as emotional lifelines.

Meta sees the feature as another layer of defence, not a cure-all. Teen Accounts already apply strict restrictions by default for U16 users. The company says the new system detects sudden behavioural changes. In theory, it acts like a smoke detector for distress signals.

Critics Say Alerts May Do More Harm Than Good

Not everyone applauds. Advocacy groups worry the alerts may trigger fear without context. Andy Burrows of the Molly Rose Foundation calls the move “fraught with risk,” warning that forced disclosures might make situations worse.

Ian Russell, whose daughter Molly died after exposure to harmful online content, expressed deep concern. He noted that a sudden message saying a child may be suicidal could send parents into panic mode rather than thoughtful support.

Charities also argue that the alerts ignore a deeper issue. Harmful content still circulates widely. Ged Flynn of Papyrus Prevention of Young Suicide says families do not want warnings after exposure. They want platforms to stop recommending dangerous material in the first place.

Another criticism cuts deeper. The feature shifts responsibility from platform design to parental oversight. If the system fails, blame falls at home rather than in Silicon Valley boardrooms.

Legal Pressure Drives Safety Features

Meta does not operate in a vacuum. Governments worldwide are tightening rules on child safety online. Courts are examining whether social platforms design addictive environments that harm minors. Recent trials have placed company leaders under oath about algorithmic influence on teen mental health.

The new alert system arrives during intense scrutiny. Lawmakers demand accountability. Parents demand transparency. Investors demand stability. Safety features are both moral gestures and legal armour.

Some observers interpret the move as preemptive. By introducing protective tools, Meta shows regulators it acts voluntarily. That strategy may reduce the risk of harsher laws or fines.

Privacy, Trust, and Teenage Autonomy

Teen users occupy a tricky space between childhood and independence. Surveillance may protect them. It may also erode trust. A teenager who knows that repeated searches can trigger parental alerts may stop using mainstream channels. They may move to encrypted platforms or anonymous spaces where help resources disappear.

Psychologists warn that secrecy can increase danger. If teens feel monitored rather than supported, they may hide their distress instead of seeking help. Healthy intervention depends on trust, not panic.

Sameer Hinduja of the Cyberbullying Research Center notes that alerts alone are not enough. Parents need guidance immediately. Without it, fear replaces understanding.

Meta says it understands that risk. The company promises contextual resources with each alert. Whether families use them effectively is unknown.

AI Joins the Mental Health Equation

Another twist involves artificial intelligence. Teens increasingly turn to chatbots for advice. Some see AI as judgment-free support. Others worry about inaccurate or dangerous responses. Instagram plans to monitor conversations with its AI assistant for signs of crisis.

The development reflects a broader trend. Social platforms no longer just host conversations. They participate in them. When AI acts as a confidant, therapist, and companion, oversight questions multiply.

Families must navigate a world where emotional support may come from algorithms. Platforms must decide how deeply to intervene. Regulators must decide who bears responsibility when things go wrong.

TF Summary: What’s Next

Instagram’s parental alerts promise early detection of distress signs. They also raise thorny questions about privacy, responsibility, and trust. The feature may help some families intervene sooner. It may alarm others without providing enough context. Its effectiveness will depend on how parents respond and whether exposure to harmful content actually declines.

MY FORECAST: Expect similar systems across the industry. Governments will push harder. Public scrutiny will intensify. Platforms will race to show they protect young users. The future likely holds deeper monitoring paired with loud controversies about autonomy and digital childhood. Technology rarely solves human problems cleanly. It amplifies them, then offers tools to manage the fallout.