Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week, Meta released the latest in its Llama series of generative AI models: Llama 3 8B and Llama 3 70B. Capable of analyzing and writing text, the models are “open sourced,” Meta said — intended to be a “foundational piece” of systems that developers design with their unique goals in mind.

“We believe these are the best open source models of their class, period,” Meta wrote in a blog post. “We are embracing the open source ethos of releasing early and often.”

There’s only one problem: the Llama 3 models aren’t really “open source,” at least not in the strictest definition.

Open source implies that developers can use the models how they choose, unfettered. But in the case of Llama 3 — as with Llama 2 — Meta has imposed certain licensing restrictions. For example, Llama models can’t be used to train other models. And app developers with over 700 million monthly users must request a special license from Meta.

Debates over the definition of open source aren’t new. But as companies in the AI space play fast and loose with the term, it’s injecting fuel into long-running philosophical arguments.

Last August, a study co-authored by researchers at Carnegie Mellon, the AI Now Institute and the Signal Foundation found that many AI models branded as “open source” come with big catches — not just Llama. The data required to train the models is kept secret. The compute power needed to run them is beyond the reach of many developers. And the labor to fine-tune them is prohibitively expensive.

So if these models aren’t truly open source, what are they, exactly? That’s a good question; defining open source with respect to AI isn’t an easy task.

One pertinent unresolved question is whether copyright, the foundational IP mechanism open source licensing is based on, can be applied to the various components and pieces of an AI project, in particular a model’s inner scaffolding (e.g. embeddings). Then there’s the mismatch to overcome between the perception of open source and how AI actually functions: open source was devised in part to ensure that developers could study and modify code without restrictions. With AI, though, which ingredients you need to do the studying and modifying is open to interpretation.

Wading through all the uncertainty, the Carnegie Mellon study does make clear the harm inherent in tech giants like Meta co-opting the phrase “open source.”

Often, “open source” AI projects like Llama end up kicking off news cycles (free marketing) and providing technical and strategic benefits to the projects’ maintainers. The open source community rarely sees these same benefits, and when it does, they’re marginal compared to the maintainers’.

Instead of democratizing AI, “open source” AI projects — especially those from big tech companies — tend to entrench and expand centralized power, say the study’s co-authors. That’s good to keep in mind the next time a major “open source” model release comes around.

Here are some other AI stories of note from the past few days:

- Meta updates its chatbot: Coinciding with the Llama 3 debut, Meta upgraded its AI chatbot across Facebook, Messenger, Instagram and WhatsApp — Meta AI — with a Llama 3-powered backend. It also launched new features, including faster image generation and access to web search results.

- AI-generated porn: Ivan writes about how the Oversight Board, Meta’s semi-independent policy council, is turning its attention to how the company’s social platforms are handling explicit, AI-generated images.

- Snap watermarks: Social media service Snap plans to add watermarks to AI-generated images on its platform. A translucent version of the Snap logo with a sparkle emoji, the new watermark will be added to any AI-generated image exported from the app or saved to the camera roll.

- The new Atlas: Hyundai-owned robotics company Boston Dynamics has unveiled its next-generation humanoid Atlas robot, which, in contrast to its hydraulics-powered predecessor, is all-electric — and much friendlier in appearance.

- Humanoids on humanoids: Not to be outdone by Boston Dynamics, the founder of Mobileye, Amnon Shashua, has launched a new startup, Menteebot, focused on building bipedal robotics systems. A demo video shows Menteebot’s prototype walking over to a table and picking up fruit.

- Reddit, translated: In an interview with Amanda, Reddit CPO Pali Bhat revealed that an AI-powered language translation feature to bring the social network to a more global audience is in the works, along with an assistive moderation tool trained on Reddit moderators’ past decisions and actions.

- AI-generated LinkedIn content: LinkedIn has quietly started testing a new way to boost its revenues: a LinkedIn Premium Company Page subscription, which (for fees that appear to be as steep as $99/month) includes AI to write content and a suite of tools to grow follower counts.

- A Bellwether: Google parent Alphabet’s moonshot factory, X, this week unveiled Project Bellwether, its latest bid to apply tech to some of the world’s biggest problems. Here, that means using AI tools to identify natural disasters like wildfires and flooding as quickly as possible.

- Protecting kids with AI: Ofcom, the regulator charged with enforcing the U.K.’s Online Safety Act, plans to launch an exploration into how AI and other automated tools can be used to proactively detect and remove illegal content online, specifically to shield children from harmful content.

- OpenAI lands in Japan: OpenAI is expanding to Japan, with the opening of a new Tokyo office and plans for a GPT-4 model optimized specifically for the Japanese language.

More machine learnings

Can a chatbot change your mind? Swiss researchers found that not only can chatbots do so, but if they are pre-armed with some personal information about you, they can actually be more persuasive in a debate than a human with that same info.

“This is Cambridge Analytica on steroids,” said project lead Robert West from EPFL. The researchers suspect the model — GPT-4 in this case — drew from its vast stores of arguments and facts online to present a more compelling and confident case. But the outcome kind of speaks for itself. Don’t underestimate the power of LLMs in matters of persuasion, West warned: “In the context of the upcoming US elections, people are concerned because that’s where this kind of technology is always first battle tested. One thing we know for sure is that people will be using the power of large language models to try to swing the election.”

Why are these models so good at language anyway? That’s an area with a long history of research, going back to ELIZA. If you’re curious about one of the people who’s been there for a lot of it (and performed no small amount of it himself), check out this profile on Stanford’s Christopher Manning. He was just awarded the John von Neumann Medal; congrats!

In a provocatively titled interview, another long-term AI researcher (who has graced the TechCrunch stage as well), Stuart Russell, and postdoc Michael Cohen speculate on “How to keep AI from killing us all.” Probably a good thing to figure out sooner rather than later! It’s not a superficial discussion, though — these are smart people talking about how we can actually understand the motivations (if that’s the right word) of AI models and how regulations ought to be built around them.

The interview is actually regarding a paper in Science published earlier this month, in which they propose that advanced AIs capable of acting strategically to achieve their goals, which they call “long-term planning agents,” may be impossible to test. Essentially, if a model learns to “understand” the testing it must pass in order to succeed, it may very well learn ways to creatively negate or circumvent that testing. We’ve seen it at a small scale, so why not a large one?

Russell proposes restricting the hardware needed to make such agents… but of course, Los Alamos and Sandia National Labs just got their deliveries. LANL just had the ribbon-cutting ceremony for Venado, a new supercomputer intended for AI research, composed of 2,560 Nvidia Grace Hopper chips.

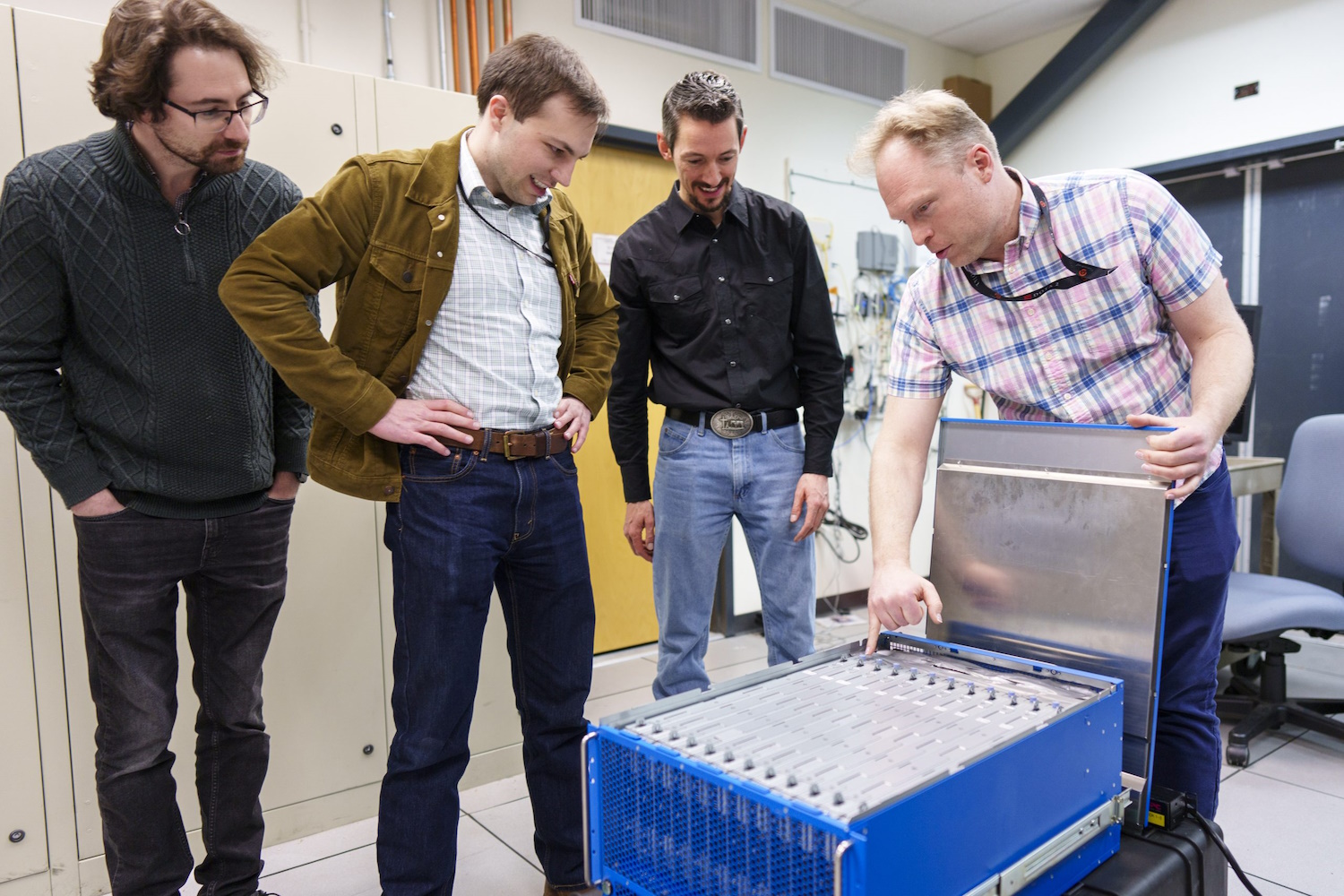

And Sandia just received “an extraordinary brain-based computing system called Hala Point,” with 1.15 billion artificial neurons, built by Intel and believed to be the largest such system in the world. Neuromorphic computing, as it’s called, isn’t intended to replace systems like Venado, but to pursue new methods of computation that are more brain-like than the rather statistics-focused approach we see in modern models.

“With this billion-neuron system, we will have an opportunity to innovate at scale both new AI algorithms that may be more efficient and smarter than existing algorithms, and new brain-like approaches to existing computer algorithms such as optimization and modeling,” said Sandia researcher Brad Aimone. Sounds dandy… just dandy!

Source: techcrunch.com