Police AI, federal takedowns, and commercial surveillance all collided this week — and none of it felt small.

Cybercrime news rarely arrives in tidy little boxes. This week proved it again. In the U.K., Essex Police paused live facial recognition deployments after officials flagged potential bias and accuracy risks. In the U.S., the FBI and the Department of Justice seized domains tied to an Iran-linked operation that claimed responsibility for a destructive cyberattack on Stryker. At the same time, FBI Director Kash Patel confirmed the bureau is again buying commercially available location data on Americans.

The stories look different on the surface. One centers on facial recognition bias. One centers on cyber disruption and foreign influence operations. One centers on data brokers and government surveillance. Yet they all point to the same blunt truth: modern cybercrime and digital policing sit inside a wider contest over trust, rights, security, and who gets to collect what.

What’s Happening & Why This Matters

Essex Police Hit Pause After Facial Recognition Bias Concerns

The most immediate civil-liberties story came out of England. The Information Commissioner’s Office said Essex Police had already paused live facial recognition deployments before an ICO audit because the force identified “potential accuracy and bias risks.” The regulator added that it continues to work with Essex to make sure those risks are addressed.

That pause followed research commissioned by Essex and carried out by academics from the University of Cambridge. The fieldwork used 188 paid actors walking through a live deployment area in Chelmsford. The report found the system correctly identified around half of the people on a watchlist and produced very few incorrect identifications. Here’s the hard part: the system was statistically more likely to correctly identify Black participants than participants from other ethnic groups. It was also more likely to correctly identify men than women.

Dr Matt Bland, one of the report’s authors, put the fairness issue in plain terms. He told The Guardian that if an offender passes the cameras set up as they were in Essex, “the chances of being identified as being on a police watchlist are greater if you’re black”. He added that the system “warrants further investigation.” That is not a casual footnote. That is the central issue. Bias in facial recognition does not only mean innocent people face more risk. It can mean surveillance pressure falls unevenly across communities.

Essex Police said the force paused deployments, worked with the software provider, revised policies and procedures, and believes it can begin using the technology again while continuing to monitor results. That response matters because it shows a force trying to move from “deploy first” to “measure, correct, then redeploy.” Still, critics are not impressed. Jake Hurfurt of Big Brother Watch told The Guardian, “AI surveillance that is experimental, untested, inaccurate or potentially biased has no place on our streets.” That line cuts to the core. In public-space policing, “close enough” is not good enough.

The story lands at a tense moment for U.K. policing. The Guardian reported that the Home Office announced in January that the number of live facial recognition vans would increase fivefold, with 50 vans made available to every police force in England and Wales. So the Essex pause does not sit in isolation. It cuts across a much wider push to normalize real-time biometric policing. That means one local pause carries national weight.

FBI, DOJ Combat Iran-Linked Domains

The second story came with more hard edges. On 19 March, the Justice Department announced the seizure of four domains tied to Iran’s Ministry of Intelligence and Security. According to the DOJ, the domains were used to claim hacks, post stolen data, issue threats, and run online psychological operations. The department said that one of the seized domains (Handala-hack[.]to) claimed credit for a March 2026 destructive malware attack against a U.S.-based multinational technology firm. The DOJ did not name the company in the press release, but reporting connected that claim to the Stryker cyberattack.

That matters because the attack did not look like a routine data-theft stunt. Stryker said in customer updates that it suffered a global network disruption tied to a cyberattack affecting its Microsoft environment. The company said it had no indication of ransomware or malware and believed the incident was contained, but outside reporting described the campaign as data-wiping and disruptive.

The DOJ described the domains as part of an Iranian cyber-enabled intimidation effort. The department said the seized infrastructure supported doxxing, psychological operations, and calls for violence against dissidents, journalists, and Israeli-associated targets. Attorney General Pamela Bondi said the takedown removed sites used to spread anti-American hate. FBI Director Kash Patel added, “We took down four of their operation’s pillars, and we’re not done.” The government’s message was direct: this was not only a hack. It was a pressure campaign built to frighten, inflame, and manipulate.

That distinction matters more than it used to. Cyber operations increasingly blend system disruption, information leaks, reputational harm, and real-world threats. The keyboard no longer stays in its lane. A destructive attack on a company like Stryker carries business, operational, and narrative risks simultaneously. Once threat actors start mixing data leaks with intimidation and propaganda, the incident stops being a simple breach story. It becomes a hybrid operation.

For defenders, that raises the bar. It is no longer enough to restore systems and patch the hole. Teams need to manage communications, trust, law-enforcement coordination, customer confidence, and sometimes even employee safety. The cyber battlefield includes inboxes, leak sites, public statements, and fear itself. Charming, really — like malware wearing a suit and carrying a megaphone.

Director Confirms FBI Is Buying Citizens’ Location Data… Again

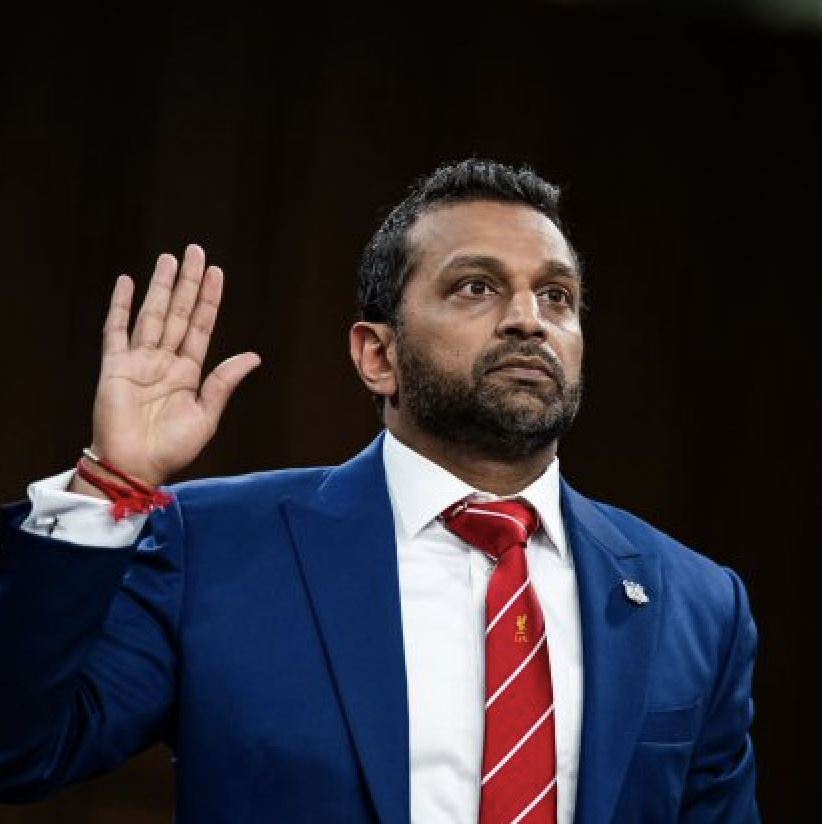

The third story may carry the widest long-term reach because it touches ordinary people directly. During an 18 March Senate Intelligence Committee hearing, FBI Director Kash Patel confirmed under oath that the FBI buys commercially available information, including location data, and said the practice is consistent with the Constitution and the Electronic Communications Privacy Act. He said the purchases have led to “valuable intelligence.”

That confirmation landed like a bomb because, in 2023, then-FBI Director Christopher Wray told the Senate that the bureau was not actively purchasing Americans’ location data. Patel’s testimony signaled a return to the practice. Reporting from The Verge, The Guardian, and Ars Technica tied the exchange to questions from Senator Ron Wyden, who has long argued that buying data from brokers lets agencies sidestep warrant protections that would apply if they sought the same information from phone companies.

The privacy concern is not abstract. Commercial location data can reveal where people live, where they sleep, where they worship, who they meet, what clinics they visit, and which protests they attend. Apps collect that information, data brokers package it, and intermediaries resell it. Any buyer with money can get access. Once the government buys it, the Fourth Amendment debate starts all over again. The data may be “commercially available,” but the result can still feel like surveillance without a warrant.

Wyden’s concern, as reported across outlets, was that the bureau is buying its way around constitutional limits. Patel’s defense was that the bureau uses all lawful tools available. That clash captures the fight in one shot. Law enforcement sees utility. Civil-liberties advocates see a loophole. The public gets stuck in the middle, carrying phones that quietly generate the market in the first place.

This issue connects back to the first two stories. Facial recognition depends on data and governance. Foreign cyber campaigns weaponize stolen data and leaked identity details. Brokered location data shows how much sensitive information already moves through commercial channels before a hacker or government actor ever touches it. In other words, the modern cybercrime story does not start with the breach. It often starts with data that already exists.

TF Summary: What’s Next

This week’s cybercrime round-up showed three versions of the same digital problem. In Essex, officials confronted the risk that public-facing AI policing can work unevenly across groups. In the U.S., federal agencies knocked out domains tied to an Iran-linked intimidation and propaganda campaign. At the same time, the FBI confirmed it is buying commercial location data again, reopening a fierce privacy debate around surveillance by purchase.

MY FORECAST: 2026 will bring tougher fights over facial recognition bias, state-linked cyber disruption, and commercial data surveillance. Expect more audits, more courtroom pressure, more calls for warrant rules, and more effort to regulate AI policing before it becomes ordinary street furniture. The big question will not be whether the tools work. The big question will be whether democratic systems can control them before convenience starts winning every argument.
