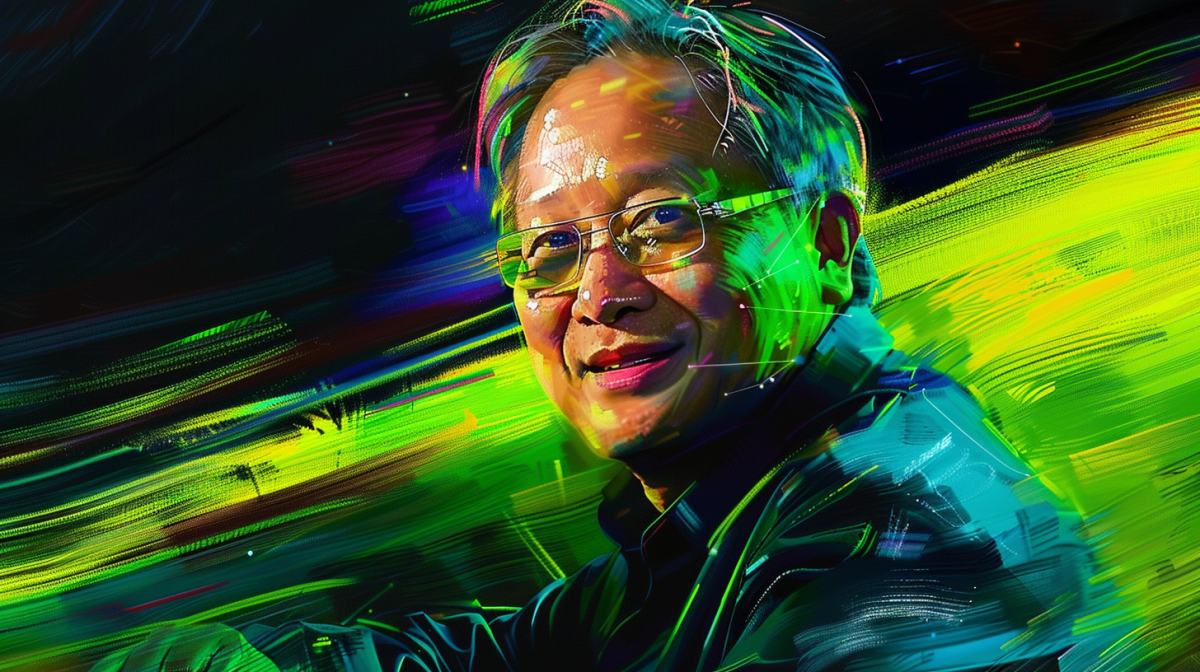

In its fiscal Q1 2025 earnings call on Wednesday, Nvidia CEO Jensen Huang highlighted the explosive growth of generative AI (GenAI) startups using Nvidia’s accelerated computing platform.

“There’s a long line of generative AI startups, some 15,000, 20,000 startups in all different fields from multimedia to digital characters, design to application productivity, digital biology,” said Huang. “The moving of the AV industry to Nvidia so that they can train end-to-end models to expand the operating domain of self-driving cars—the list is just quite extraordinary.”

Huang emphasized that demand for Nvidia’s GPUs is “incredible” as companies race to bring AI applications to market using Nvidia’s CUDA software and Tensor Core architecture. Consumer internet companies, enterprises, cloud providers, automotive companies and healthcare organizations are all investing heavily in “AI factories” built on thousands of Nvidia GPUs.

The Nvidia CEO said the shift to generative AI is driving a “foundational, full-stack computing platform shift” as computing moves from information retrieval to generating intelligent outputs.

“[The computer] is now generating contextually relevant, intelligent answers,” Huang explained. “That’s going to change computing stacks all over the world. Even the PC computing stack is going to get revolutionized.”

To meet surging demand, Nvidia began shipping its H100 “Hopper” architecture GPUs in Q1 and announced its next-gen “Blackwell” platform, which delivers 4-30X faster AI training and inference than Hopper. Over 100 Blackwell systems from major computer makers will launch this year to enable wide adoption.

Huang said Nvidia’s end-to-end AI platform capabilities give it a major competitive advantage over narrower solutions as AI workloads rapidly evolve. He expects demand for Nvidia’s Hopper, Blackwell and future architectures to outstrip supply well into next year as the GenAI revolution takes hold.

Struggling to keep up with demand for AI chips

Despite the record-breaking $26 billion in revenue Nvidia posted in Q1, the company said customer demand is significantly outpacing its ability to supply GPUs for AI workloads.

“We’re racing every single day,” said Huang regarding Nvidia’s efforts to fulfill orders. “Customers are putting a lot of pressure on us to deliver the systems and stand them up as quickly as possible.”

Huang noted that demand for Nvidia’s current flagship H100 GPU will exceed supply for some time even as the company ramps production of the new Blackwell architecture.

“Demand for H100 through this quarter continued to increase…We expect demand to outstrip supply for some time as we now transition to H200, as we transition to Blackwell,” he said.

The Nvidia CEO attributed the urgency to the competitive advantage gained by companies that are first to market with groundbreaking AI models and applications.

“The next company who reaches the next major plateau gets to announce a groundbreaking AI, and the second one after that gets to announce something that’s 0.3% better,” Huang explained. “Time to train matters a great deal. The difference between time to train that is three months earlier is everything.”

As a result, Huang said cloud providers, enterprises, and AI startups feel immense pressure to secure as much GPU capacity as possible to beat rivals to milestones. He predicted the supply crunch for Nvidia’s AI platforms will persist well into next year.

“Blackwell is well ahead of supply and we expect demand may exceed supply well into next year,” Huang stated.

Nvidia GPUs are delivering compelling returns for cloud AI hosts

Huang also provided details on how cloud providers and other companies can generate strong financial returns by hosting AI models on Nvidia’s accelerated computing platforms.

“For every $1 spent on Nvidia AI infrastructure, cloud providers have an opportunity to earn $5 in GPU instance hosting revenue over four years,” Huang stated.

Huang provided the example of a language model with 70 billion parameters using Nvidia’s latest H200 GPUs. He claimed a single server could generate 24,000 tokens per second and support 2,400 concurrent users.

“That means for every $1 spent on Nvidia H200 servers at current prices per token, an API provider [serving tokens] can generate $7 in revenue over four years,” Huang said.
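As a rough check on these figures, the arithmetic can be sketched in Python. The function names are illustrative, and the assumption that a server sustains its quoted throughput around the clock for four years is ours rather than something stated on the call.

```python
# Back-of-the-envelope arithmetic on the figures quoted above.
# Assumption (not from the call): the server sustains its quoted
# throughput continuously for the full four years.

TOKENS_PER_SECOND = 24_000   # quoted throughput of one H200 server
CONCURRENT_USERS = 2_400     # quoted number of concurrent users
REVENUE_MULTIPLE = 7         # quoted: $7 of revenue per $1 spent
YEARS = 4
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def tokens_per_user_per_second(tokens_per_second, users):
    """Average share of throughput available to each concurrent user."""
    return tokens_per_second / users


def total_tokens(tokens_per_second, years):
    """Tokens served if the server runs flat out for the whole period."""
    return tokens_per_second * SECONDS_PER_YEAR * years


def implied_price_per_million_tokens(server_cost, multiple, tokens):
    """Price per million tokens needed to earn `multiple` times the server cost.

    `server_cost` is a hypothetical input; the article does not give one.
    """
    return server_cost * multiple / (tokens / 1_000_000)


per_user = tokens_per_user_per_second(TOKENS_PER_SECOND, CONCURRENT_USERS)
lifetime_tokens = total_tokens(TOKENS_PER_SECOND, YEARS)
print(f"{per_user:.0f} tokens per second per user")
print(f"{lifetime_tokens:,} tokens over {YEARS} years")
```

Under that assumption, each concurrent user gets an average of 10 tokens per second. Passing any server cost to `implied_price_per_million_tokens` shows the per-token price a provider would need to reach the quoted $7 return; real utilization would be lower, so the required price would be higher.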

Huang added that ongoing software improvements by Nvidia continue to boost the inference performance of its GPU platforms. In the latest quarter, optimizations delivered a 3X speedup on the H100, enabling a 3X cost reduction for customers.

Huang asserted that this strong return on investment is fueling breakneck demand for Nvidia silicon from cloud giants like Amazon, Google, Meta, Microsoft and Oracle as they race to provision AI capacity and attract developers.

Combined with Nvidia’s unmatched software tools and ecosystem support, he argued these economics make Nvidia the platform of choice for GenAI deployments.

Nvidia making aggressive push into Ethernet networking for AI

While Nvidia is best known for its GPUs, the company is also a major player in datacenter networking with its InfiniBand technology.

In Q1, Nvidia reported strong year-over-year growth in networking, driven by InfiniBand adoption.

However, Huang emphasized that Ethernet is a major new opportunity for Nvidia to bring AI computing to a wider market. In Q1, the company began shipping its Spectrum-X platform, which is optimized for AI workloads over Ethernet.

“Spectrum-X opens a brand new market to Nvidia networking and enables Ethernet-only datacenters to accommodate large-scale AI,” said Huang. “We expect Spectrum-X to jump to a multi-billion dollar product line within a year.”

Huang said Nvidia is “all-in on Ethernet” and will deliver a major roadmap of Spectrum switches to complement its InfiniBand and NVLink interconnects. This three-pronged networking strategy will allow Nvidia to target everything from single-node AI systems to massive clusters.

Nvidia also began sampling its 51.2-terabit-per-second Spectrum-4 Ethernet switch during the quarter. Huang said leading server makers like Dell are embracing Spectrum-X to bring Nvidia’s accelerated AI networking to market.

“If you invest in our architecture today, without doing anything, it will go to more and more clouds and more and more datacenters, and everything just runs,” assured Huang.

Record Q1 results driven by data center and gaming

Nvidia delivered record revenue of $26 billion in Q1, up 18% sequentially and 262% year-over-year, significantly surpassing its outlook of $24 billion.
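The growth percentages imply what revenue looked like in the comparison periods. A minimal Python sketch, using the rounded $26 billion figure (so the results are approximate) and an illustrative helper name:

```python
def implied_base(current, growth_pct):
    """Revenue in the comparison period implied by a percentage growth rate."""
    return current / (1 + growth_pct / 100)


Q1_REVENUE_BN = 26.0  # rounded figure from the article, in billions of dollars

prior_quarter = implied_base(Q1_REVENUE_BN, 18)   # 18% sequential growth
year_ago = implied_base(Q1_REVENUE_BN, 262)       # 262% year-over-year growth

print(f"Implied prior-quarter revenue: ${prior_quarter:.1f} billion")
print(f"Implied year-ago revenue: ${year_ago:.1f} billion")
```

This works out to roughly $22 billion in the prior quarter and a little over $7 billion a year earlier.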

The Data Center business was the primary driver of growth, with revenue soaring to $22.6 billion, up 23% sequentially and an astonishing 427% year-over-year. CFO Colette Kress highlighted the incredible growth in the data center segment:

“Compute revenue grew more than 5X and networking revenue more than 3X from last year. Strong sequential data center growth was driven by all customer types, led by enterprise and consumer internet companies. Large cloud providers continue to drive strong growth as they deploy and ramp Nvidia AI infrastructure at scale.”

Gaming revenue was $2.65 billion, down 8% sequentially but up 18% year-over-year. This was in line with Nvidia’s expectations of a seasonal decline. Kress noted, “The GeForce RTX SUPER GPU market reception is strong, and end demand and channel inventory remain healthy across the product range.”

Professional Visualization revenue was $427 million, down 8% sequentially but up 45% year-over-year. Automotive revenue reached $329 million, growing 17% sequentially and 11% year-over-year.

For Q2, Nvidia expects revenue of approximately $28 billion, plus or minus 2%, with sequential growth anticipated across all market platforms.
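The guidance band can be made concrete with a short Python sketch; the helper name is illustrative.

```python
def guidance_range(midpoint, tolerance_pct):
    """Return the (low, high) bounds of a 'plus or minus' guidance figure."""
    delta = midpoint * tolerance_pct / 100
    return midpoint - delta, midpoint + delta


# $28 billion, plus or minus 2%, in billions of dollars
low, high = guidance_range(28.0, 2)
print(f"Q2 guidance range: ${low:.2f} billion to ${high:.2f} billion")
```

In other words, the outlook spans roughly $27.44 billion to $28.56 billion.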

Nvidia stock rose 5.9% in after-hours trading to $1,005.75 following the company’s announcement of a 10-for-1 stock split.
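For a sense of what the split means per share, here is a minimal Python sketch. It applies the split ratio to the after-hours price quoted above purely for illustration; the actual post-split price depends on where the stock trades when the split takes effect.

```python
def split_adjusted_price(price, ratio):
    """Per-share price after a `ratio`-for-1 split, holding market value constant."""
    return price / ratio


def split_adjusted_shares(shares, ratio):
    """Number of shares held after a `ratio`-for-1 split."""
    return shares * ratio


AFTER_HOURS_PRICE = 1005.75  # quoted after-hours price in dollars
SPLIT_RATIO = 10

adjusted = split_adjusted_price(AFTER_HOURS_PRICE, SPLIT_RATIO)
print(f"Split-adjusted price: ${adjusted:.2f}")
print(f"One share becomes {split_adjusted_shares(1, SPLIT_RATIO)} shares")
```

A holder's total position value is unchanged by the split: ten times as many shares, each worth about a tenth of the old price.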

Important Disclosure: The author owns securities of Nvidia Corporation (NV

Source: venturebeat.com