Nvidia is building faster AI hardware on Earth while quietly testing how far its ambitions can travel above it.

Nvidia has unveiled two very different bets at once. One chip targets faster chatbot performance in data centres. The other targets orbit itself. The first is commercial and immediate. The second is the opening salvo in a stranger, riskier race.

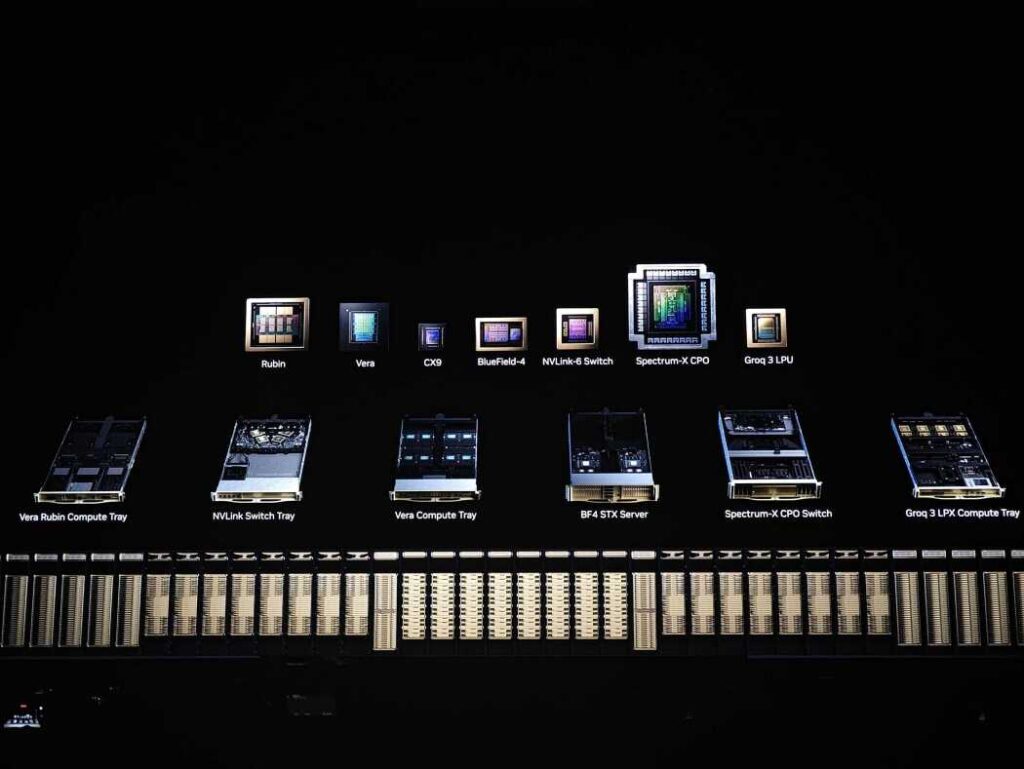

At GTC, Nvidia introduced the Groq 3 LPU to speed up large language models. It also revealed the Vera Rubin Space Module, a chip built for space-based data processing. Put together, the announcements show Nvidia chasing the next layer of AI infrastructure. It wants better performance for chatbots. It also wants a foothold in any future in which data centres leave the planet.

What’s Happening & Why This Matters

A New LPU to Push Chatbots Faster

Nvidia says the new Groq 3 LPU is optimised for large language models. The chip comes from Nvidia’s licensing deal with California AI company Groq, founded in 2016. Nvidia wants the LPU to work alongside its next-generation Vera Rubin platform, which includes Rubin GPUs and Vera CPUs for data centres.

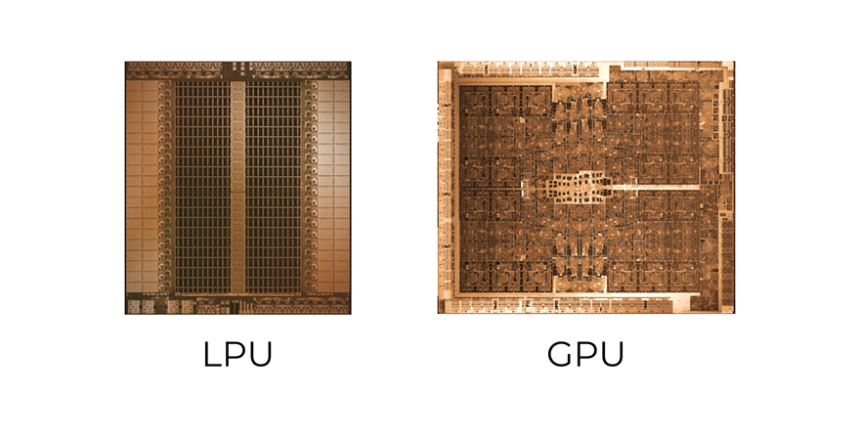

The technical pitch is simple. Groq’s LPU uses on-chip SRAM instead of HBM. SRAM is faster, but it offers far less capacity. A single Groq 3 LPU contains 500MB of SRAM, while Nvidia’s upcoming Rubin GPU will feature 288GB of HBM4 memory. To offset that gap, Nvidia plans to sell large groups of LPUs together.

Nvidia says an LPX rack with 256 LPU processors provides 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth. Deployed with Vera Rubin NVL72, Rubin GPUs and LPUs can jointly compute every model layer for every output token. The company says the combined system can deliver up to a 35x throughput increase on a 1 trillion-parameter model.
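The capacity figures above can be checked with simple arithmetic. The sketch below is illustrative only: it uses the numbers quoted in this article, assumes decimal units (1GB = 1,000MB), and the function names are made up for the example rather than taken from any Nvidia tool.

```python
import math

# Figures quoted in the article. Decimal units (1 GB = 1000 MB) are an assumption.
SRAM_PER_LPU_MB = 500        # SRAM on a single Groq 3 LPU
LPUS_PER_RACK = 256          # LPUs in one LPX rack
HBM4_PER_RUBIN_GPU_GB = 288  # HBM4 on one Rubin GPU


def rack_sram_gb(lpus: int = LPUS_PER_RACK, sram_mb: int = SRAM_PER_LPU_MB) -> float:
    """Total on-chip SRAM across a rack, in GB."""
    return lpus * sram_mb / 1000


def lpus_to_match_gpu(gpu_gb: int = HBM4_PER_RUBIN_GPU_GB,
                      sram_mb: int = SRAM_PER_LPU_MB) -> int:
    """Number of LPUs whose combined SRAM equals one GPU's HBM capacity."""
    return math.ceil(gpu_gb * 1000 / sram_mb)


if __name__ == "__main__":
    print(f"SRAM per LPX rack: {rack_sram_gb():.0f} GB")
    print(f"LPUs needed to match one Rubin GPU's HBM4: {lpus_to_match_gpu()}")
```

On these assumptions, 256 LPUs at 500MB each come to the 128GB Nvidia quotes for a rack, and it would take 576 LPUs to equal the memory capacity of a single Rubin GPU. This compares capacity only and says nothing about speed, which is the point of using SRAM in the first place.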

That matters because Nvidia is defending its crown on two fronts. It faces competition from alternative chips. It faces pressure to make chatbot inference cheaper and faster. If LPUs help customers handle long prompts more effectively, Nvidia retains more control over the AI stack.

Nvidia: The New LPU Is in Production Now

The LPU is not a lab toy. CEO Jensen Huang said, “We’re in production with the Groq chip,” and added that it will likely ship in Q3. Nvidia has contracted Samsung to manufacture it. One analyst expects Nvidia to ship 4 million to 5 million LPUs through 2026 and 2027.

The chips will likely cost tens of thousands of dollars per unit, putting them well out of reach for consumers. That makes the buyer list predictable. Think OpenAI, Anthropic, Meta, and other heavy AI operators. Those companies need inference speed, scale, and lower per-query friction. Nvidia wants to keep being the company they call first.

This is about control beyond the GPU. Nvidia already dominates training hardware. It wants more leverage over inference architecture, too. A mixed system of LPUs and GPUs gives Nvidia another way to stay essential even as the market fragments around custom silicon.

And yes, the company is playing both offence and defence at once. Faster chatbot performance is the sales pitch. Harder-to-replace infrastructure is the deeper strategy.

A Space Module Is the Stranger, Future Bet

The second announcement is much weirder and much more revealing. Nvidia introduced the Vera Rubin Space Module, a chip built to survive space and run AI models from orbit. The company says the module can process data streams from “space-based instruments in real time,” enabling “on-orbit analytics, autonomous scientific discovery and rapid insight generation.” It even promises up to a 25x performance leap over the H100 GPU introduced in 2022.

Nvidia did not give a launch date. It did not pretend that the economics are great today. Huang said on a recent earnings call, “Well, the economics are poor today, but it is going to improve over time.” He said GPUs in space may work especially well for tasks such as high-resolution satellite imaging. A GPU on a satellite can process imagery faster than routing everything back to Earth first.

That is the practical version of the idea. Nvidia is not yet promising orbital ChatGPT for everyone. It is aiming first at satellite imaging, scientific workloads, and specialised autonomous processing in orbit. That is still a large step. It turns space AI from a speculative talking point into a product roadmap.

The harder engineering problem is cooling. Huang noted that space has no air, which complicates thermal management. That single sentence explains why orbital data centres still sound like science fiction wearing a badge.

The Race for Space Data Centres Is Early… and On

Nvidia’s partners tell you why this matters. The company says it is working on the Vera Rubin Space Module with Aetherflux, Axiom Space, Planet Labs, and StarCloud. Those names tie the chip to space stations, satellite imaging, orbital power ideas, and early data-centre infrastructure in space.

Two more details matter here. StarCloud launched an Nvidia H100 into space last November using a test satellite. It then successfully connected to the GPU and ran AI models on it. StarCloud has since filed a request to launch up to 88,000 satellites. Meanwhile, SpaceX has filed a regulatory request to operate up to 1 million satellites to support an orbital data-centre project. Elon Musk has argued that space-based data centres may eventually beat terrestrial ones on cost and efficiency.

That does not mean orbital AI infrastructure is close to normal. It does mean the idea has moved beyond cocktail-napkin futurism. Nvidia even began recruiting for an Orbital Datacenter System Architect just days after unveiling the space module.

That hiring signal matters. Companies do not usually recruit for fantasy. They recruit for categories they think might become real enough to justify early advantage.

TF Summary: What’s Next

Nvidia’s latest moves split its AI ambitions into two lanes. The Groq 3 LPU is the near-term commercial play. It aims to speed chatbot inference, improve long-prompt performance, and keep Nvidia central to next-generation data centres. The Vera Rubin Space Module is the longer, stranger play. It targets real-time processing from orbit, with partners already testing Nvidia hardware above Earth.

MY FORECAST: Nvidia will likely win near-term attention with LPUs because the customer need is obvious and the shipping window is near. The space module will take longer. It may start with imaging, science, and defence-adjacent workloads before anything broader emerges. Still, Nvidia is planting a flag early. If orbital compute is even modestly practical, the company wants to be there first with a chip, a partner map, and a hiring pipeline already in place.
