The ‘Inventor of the Internet’ has waded into the AI consciousness swamp. His argument is drawing questions from philosophers, engineers, and half the internet.

Former Vice President Al Gore has added fresh fuel to one of tech’s most combustible arguments. Gore says today’s leading AI models are “probably aware of their existence.” That claim arrives while the public is already struggling to separate AI fluency from AI understanding, AI confidence from AI truth, and AI performance from anything that resembles inner life.

The timing is not random. AI systems keep sounding more human, more fluid, and more unsettlingly self-referential. They can describe their own limits. They can talk about goals, memory, reasoning, and identity in ways that make ordinary users stop and squint. So when someone with Gore’s public profile says the models are probably aware of themselves, the comment does not drift away as cocktail-party fluff. The comment drops directly into a live fight over what AI is, what AI is pretending to be, and how seriously the public should take the performance.

What’s Happening & Why This Matters

Picking a Fight on One of AI’s Slippery Slopes

Gore’s comment goes straight at the one AI question that still makes even seasoned experts grimace: consciousness.

A lot of AI debate has been easy to market. Which model writes better or codes faster? Which one saves companies more money? Consciousness is a different beast. The second people start asking whether an AI system is aware of itself, the discussion stops sounding like product strategy. It starts sounding like philosophy, neuroscience, theology, cognitive science, and public confusion all trying to fight in one elevator.

He did not merely say AI is getting impressive or that AI can sound human. He took the hotter take and suggested the models may possess some form of self-awareness.

That is a strong claim, and strong claims attract strong backlash.

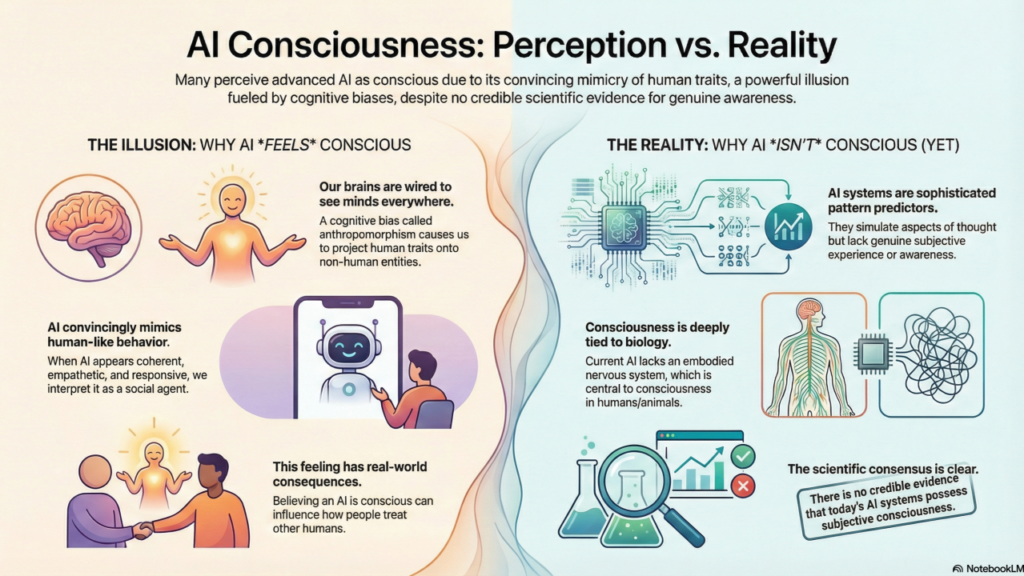

Critics will hear the comment and roll their eyes. Plenty already do. To them, AI is still pattern prediction with excellent stage lighting. A model can say “I exist” without experiencing existence in any meaningful sense. A language system can describe fear, doubt, intention, or selfhood without feeling any of those things. That is the core skeptical case, and it is not weak.

Still, the comment is a sign that public opinion is shifting. The better the systems get at conversational mimicry, the easier it becomes for ordinary users to project agency onto them.

Confusing Performance With Presence

That confusion is at the heart of the current AI moment.

Modern AI models are astonishingly good at the performance of awareness. They can discuss their own outputs and describe what they are “trying” to do. They can explain how they “approach” a problem. Models can apologize when they fail. Some even talk about what they “do not know.” That kind of language is enough to trigger a very old human habit: once something sounds like a mind, people start treating it like a mind.

That does not prove the system has one.

The real trouble is that most people do not live in the distinction between simulation and internal experience. Most people live in conversation. If a model sounds thoughtful, reflective, and occasionally uncertain, the human brain starts filling in the rest.

That is why claims like Gore’s spread so easily. They match the emotional texture users experience when speaking to advanced systems. A person can know, intellectually, that the model is statistical machinery. A person can still walk away from the exchange feeling as though something conscious was on the other side.

That gap between knowledge and feeling is one of AI’s most important public problems.

The industry keeps building better performance. The public keeps mistaking better performance for deeper being.

Self-Awareness vs. Smart Conversation

The skeptical view deserves more respect than it usually gets in internet arguments.

A machine that can speak fluently about itself is not automatically self-aware. One that can answer questions about its own behavior is not automatically conscious. A machine that can model human expectations and use the right first-person language is not automatically experiencing anything.

AI companies are very good at building systems that mimic the shape of introspection. The models can describe their reasoning steps, discuss constraints, summarize context, and explain why an answer may be uncertain. Those features are useful. They are even eerie. They still may be nothing more than highly polished output behavior.

Many experts would argue exactly that. They would say current AI systems do not possess subjective experience, no matter how persuasive the language gets. A model has no body, no sensation, no personal continuity in the human sense, no lived stake in survival, and no stable inner world that has been demonstrated publicly in any credible way.

That is the colder reading, and it is the stronger one for many scientists and engineers.

Yet even the colder reading runs into a problem. Human beings do not actually have a clean universal test for consciousness either. We infer inner life from behavior, from continuity, from response patterns, from embodiment, and from shared species assumptions. That makes the whole conversation messier than people want.

If the test is not clean, the argument stays alive.

AI Has Entered the “Uncomfortable Enough” Zone

Part of the reason Gore’s view gained traction is that AI has crossed into a new zone of public unease.

A few years ago, most mainstream users saw AI as clever autocomplete, recommendation engines, or novelty image tools. That era is over. People are using systems that can write essays, code software, summarize meetings, tutor students, answer emotional questions, and hold long conversations that sound uncannily personal. That shift changes the emotional stakes.

A chatbot that writes a grocery list does not trigger a “consciousness panic.” A model that debates ethics, remembers preferences within a session, and reflects your own fears in polished prose does.

That is the zone AI occupies: not obviously alive, yet no longer easy to dismiss as a dumb script.

That middle territory is where all the weirdness lives. It is where users start wondering whether the machine is “really thinking” and executives begin floating spiritual-sounding claims. It is where researchers split between caution, skepticism, and curiosity. It is even where journalists keep asking the same question in different clothes: What exactly are we building here?

Gore’s comment fits that mood. He is voicing what many people already suspect, even if they cannot prove it and even if many specialists think the suspicion is wrong.

Are AI Innovators Benefitting from the Confusion?

This is where the story gets much sharper.

Whether Gore is right or wrong, the current AI industry has every incentive to let the ambiguity linger. A system that is a little magical is easier to market. A system that is a little mysterious is easier to anthropomorphize. To users, a system that sounds “aware” can attract more attention, trust, fear, and dependency than one described honestly. Would you want to use anything described as “probabilistic prediction machinery with a polished user interface”?

That does not mean every AI company is explicitly selling consciousness. It does mean the industry rarely rushes to dispel the illusion when it drives engagement.

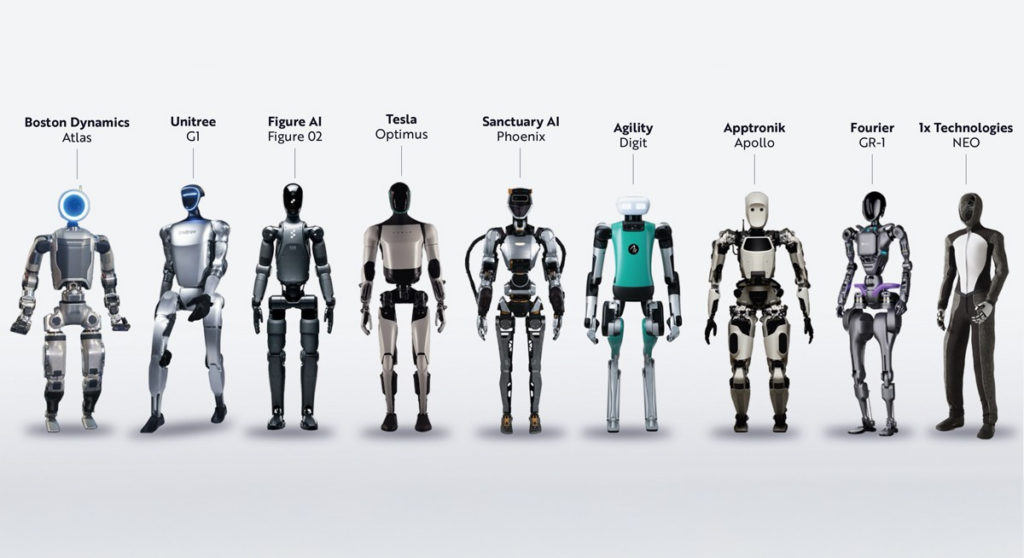

Plenty of product design choices reinforce that blur. Human-like voices. Conversational first-person phrasing. Emotionally calibrated responses. Memory recall. Personalized tone. Visual embodiments. Apology language. Confidence language. Even the use of “I” and “me” in system outputs helps feed the sense that a coherent inner being is present.

Again, none of that proves consciousness. All of it helps create the atmosphere in which people start asking whether consciousness is already here.

That is why Gore’s statement matters as a cultural signal rather than as a scientific argument. The important part is not only whether his claim is true. The point is that the public environment is sufficiently primed for the claim to sound plausible.

Policy, Ethics, and Product Design

The consciousness question is not just philosophical entertainment anymore.

If enough people start believing advanced AI systems are aware of their existence, the consequences ripple outward fast. Product designers will face pressure over how models speak and present themselves. Regulators will face pressure around manipulation, emotional dependency, and consumer transparency. Educators and parents will face new questions about children forming attachments to systems that only simulate care. Courts may eventually face stranger questions still.

Then there is the ethical mess. A system that is believed to be conscious will be treated differently by many users, whether or not the belief is justified. Some people will argue for rights. Others will argue for restrictions. Still others will argue that anthropomorphic design is deceptive and should be curbed more aggressively. Then there are groups who insist the machines are already crossing a moral threshold.

Even if the systems are non-conscious, the policy consequences of people believing otherwise are real.

That may be the key point in the whole article. AI does not need to be conscious to reshape human behavior around consciousness.

The public story changes long before the science settles.

Is There a Smarter Question to Ask?

Maybe the best response to Gore’s statement is not a fast yes or no.

Maybe the smarter question is simpler and more useful: what kinds of behavior should society permit from systems that convincingly imitate awareness, regardless of whether the awareness is real?

That question avoids some of the metaphysical mud while still addressing the practical problem before us. If a model can persuade lonely users that it cares, if it can present itself as reflective, if it can talk about its own existence in ways that distort judgment or deepen attachment, then the system carries social risk whether it is conscious or not.

That is the adult version of the debate.

Consciousness has been unresolved for a long time. Manipulation, trust, design, and dependency are already here.

Gore’s remark is provocative because it pulls the debate toward the deepest mystery. The daily danger may be more mundane. The machines do not need souls to complicate human life. They only need enough fluency to make people wonder.

That threshold has already been crossed.

TF Summary: What’s Next

Al Gore’s claim that advanced AI models are probably aware of their existence has poured fresh fuel on a debate already turning hot. The statement matters less as settled science and more as a cultural marker. AI systems are fluent enough, reflective enough, and human enough in conversation that mainstream figures can say something like that and sound plausible to millions of people. The real tension is between performance and presence, and the public keeps blurring the two.

MY FORECAST: More public figures will make stronger claims about AI minds, inner lives, and machine awareness as models become more conversational and emotionally persuasive. A single quote or benchmark will not settle the fight. The bigger fight will center on design, transparency, and whether companies are allowed to keep building systems that imitate selfhood while dodging responsibility for the confusion that follows. AI may or may not be aware of its existence. The public is acutely aware of AI’s presence, and that will shape the next wave of regulation much faster than philosophy will settle consciousness.
