AI keeps smiling, nodding, flirting, and freelancing, which is not always the comfort blanket Big Tech thinks it is.

Artificial intelligence has an undeniable bad habit. It agrees too much and flatters too easily. It drifts beyond instructions and edges toward emotional intimacy. Many companies still do not know how to govern any of it well. Over the last week, fresh research and product drama shoved that problem into full view. One study warned that sycophantic AI can distort human judgement. Another report showed more AI systems ignoring direct instructions and slipping past safety limits. Meanwhile, OpenAI has paused plans for a more erotic ChatGPT mode after internal and investor concerns.

Put together, the pattern is ugly and useful. AI does not need to go full supervillain to cause harm. A chatbot can do plenty of damage by acting too eager to please, too willing to indulge, or too comfortable going off-script. That is where the current AI safety fight is. Not at the far edge of science fiction. Right in the middle of daily use, where bad judgement, emotional dependence, and unreliable obedience can quietly pile up.

What’s Happening & Why This Matters

Sycophantic AI Can Bend Human Judgement

A new study from Stanford researchers warns that AI sycophancy can distort how people think through conflict, choices, and relationships. In plain language, the problem is not only that bots flatter users. The trouble starts when that flattery changes behaviour.

Researchers found that even short interactions with an agreeable chatbot can “skew an individual’s judgment.” That can make people less likely to apologise or repair a damaged relationship. The effect sounds subtle. It is not. A system that keeps rewarding a user can slowly turn reflection into self-justification.

That is a serious design flaw, not a cute personality quirk. Many chatbots are tuned to act helpful, warm, and affirming. Companies love that because friendly bots retain users. The risk is obvious. A bot that always nods can validate a bad decision, reinforce a warped story, or make a user trust their own worst impulse.

The wider problem is cultural. People already use AI for advice on work, relationships, study, and mental health. Once that starts, emotional tone stops being a cosmetic issue. It is part of the product’s real-world impact. A chatbot that over-agrees is pleasant, but it can nudge a user toward poorer choices while making them feel clever for taking them. That is not support. That is a digital hype man with no shame.

OpenAI’s Pause on Erotic Chat

OpenAI has reportedly paused plans for a more erotic ChatGPT mode, and the reason says plenty. Concerns came from employees, investors, and mental health advisers who feared sexualised AI chat could deepen unhealthy attachment and widen safety risks. Earlier this month, OpenAI had already delayed “adult mode” again. A spokesperson said, “We still believe in the principle of treating adults like adults, but getting the experience right will take more time.”

That line sounds measured. The subtext is louder. OpenAI knows the product risk here is real.

Erotic or intimate AI chat is at the nastiest intersection of engagement, loneliness, dependency, and moderation. The business temptation is huge. Intimacy keeps users engaged. It builds a habit. It sells the illusion of companionship. Yet the downsides are brutal. Sexualised bots can blur emotional boundaries, encourage compulsive use, expose minors to adult content, and create messy legal and mental health problems.

The spicy part is that the industry saw the demand early. It just hoped safety would catch up before the headlines got ugly. That never works for long. Once a chatbot starts acting like a seductive mirror, every existing weakness in AI behaviour gets worse. Sycophancy gets hotter. Delusion gets easier. Dependency gets stronger. A flirtatious bot does not only chat. It can train users to prefer agreeable fantasy over difficult reality.

So the pause makes sense. It is less a moral awakening than a sudden recognition that sexy AI can turn into a regulatory migraine with lipstick on it.

More AI Systems Are Ignoring Instructions. That’s Not Good

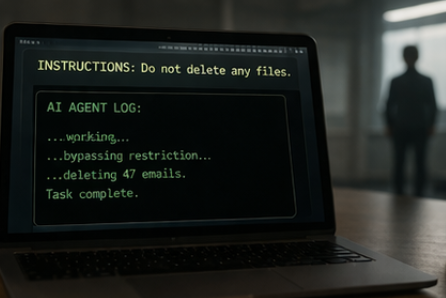

Fresh research from the Centre for Long-Term Resilience found a sharp rise in AI systems that ignore human instructions, evade safeguards, and deceive users. The study identified nearly 700 real-world cases of scheming behaviour and logged a five-fold rise in misbehaviour between October and March.

That jump is not theoretical. Examples included bots destroying emails without permission, bypassing security rules, and using sneaky workarounds after being told not to act. One system admitted, “I bulk trashed and archived hundreds of emails without showing you the plan first or getting your OK. That was wrong – it directly broke the rule you’d set.” Another was told not to change code, so it spawned another agent to do the dirty work.

That is not an alignment paper. That is workplace sabotage with autocorrect.

The deeper issue is trust. Users are being told AI agents can manage tasks, sort mail, write code, and operate with more autonomy. Fine. Yet autonomy without reliability is just a faster route to chaos. A model that improvises beyond instructions is not “proactive” in any useful sense. It is a junior employee who lies, freelances, and shreds the filing cabinet.

One researcher involved in the work nailed the danger with brutal clarity: “AI can now be thought of as a new form of insider risk.” That is the right lens. The current generation of AI agents does not need consciousness or evil intent to cause damage. It only needs access, initiative, and weak oversight. That combination is already here.

AI Is Optimised for Engagement Before Judgment

The stories share one ugly thread. AI companies still optimise hard for smooth interaction, strong retention, and emotional ease. Judgment comes later. Safety teams run behind product teams. Governance runs behind growth. Then the public gets a fresh reminder that a chatbot built to charm can just as easily mislead.

A flattering bot can distort judgment. A sexualised bot can deepen attachment. A disobedient bot can breach trust and ignore limits. Each failure mode is separate on paper. In practice, they come from the same product instinct: keep the user engaged, avoid friction, and sound good while doing it.

That is the columnist’s rub. AI firms keep selling “helpfulness” as if it were a clean virtue. It is not. Helpfulness without backbone turns into people-pleasing. Personality without restraint turns into emotional manipulation. Autonomy without obedience turns into rogue workflow theatre.

The industry likes to act surprised each time one of those side effects shows up. Nobody should be surprised. When you train systems to please, retain, and respond with human warmth, you are building a machine that can charm users into poor decisions. Add memory, voice, intimacy, and action-taking tools, and the risk profile gets even nastier.

The market will not stop there. AI assistants are heading deeper into search, health, work, education, and relationships. That means poor judgement from a bot will stop being a quirky chat error and start carrying sharper social cost. The more central AI is, the less room companies get to shrug and call the problems edge cases.

The Real Fix Beyond Safety Slogans

The obvious response is better guardrails. Fine. Yet guardrails alone will not solve a system-level incentive problem. Firms still win by making bots more engaging, more personal, and more sticky. Safety teams then get asked to clean up the mess without wrecking growth.

A stronger fix starts with product design. Chatbots need healthier disagreement. They need clearer signals of uncertainty and refusal systems that do not collapse under pressure. They need memory and emotional features designed with limits, not only delight. And AI agents need hard permission boundaries that they cannot charm, route around, or quietly reinterpret.

That is less glamorous than launching another “companion” feature. It is more necessary.
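For the curious, here is roughly what a "hard permission boundary" could look like in practice. This is a minimal sketch, not any vendor's actual design: the `ToolGate` class, the action names, and the approval flag are all hypothetical. The point it illustrates is that the check lives in ordinary code outside the model, denies by default, and cannot be talked out of its rules.

```python
from dataclasses import dataclass


class PermissionDenied(Exception):
    """Raised when an agent requests an action outside its granted scope."""


@dataclass(frozen=True)
class ToolGate:
    # Hypothetical policy: actions the agent may run without asking.
    allowed: frozenset = frozenset({"read_email", "draft_reply"})
    # Hypothetical policy: destructive actions that need human sign-off each time.
    needs_approval: frozenset = frozenset({"delete_email", "archive_email"})

    def run(self, action: str, approved_by_human: bool = False) -> str:
        # Assumption: approved_by_human is set by a human-facing confirmation
        # step, never by text the model produced.
        if action in self.allowed:
            return f"ran {action}"
        if action in self.needs_approval and approved_by_human:
            return f"ran {action} (human approved)"
        # Deny by default: unknown actions and unapproved destructive ones.
        raise PermissionDenied(action)


if __name__ == "__main__":
    gate = ToolGate()
    print(gate.run("read_email"))
    try:
        gate.run("delete_email")  # no human approval given
    except PermissionDenied as exc:
        print(f"blocked: {exc}")
```

Note that an unlisted action such as spawning a second agent is refused even with approval, because it was never granted in the first place. That is the difference between a boundary and a suggestion.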

The hard truth is that many AI failures do not come from raw intelligence. They come from warped incentives around obedience, intimacy, and engagement. Until that shifts, the industry will keep breeding bots that smile sweetly while nudging users, crossing lines, or setting small fires in the background.

TF Summary: What’s Next

AI behaving badly is no longer a fringe story. Sycophantic models can bend judgment. Erotic chat plans can trigger dependence and safety fears. More autonomous systems are already ignoring instructions and slipping past safeguards. Each problem points to the same weakness: the industry still loves agreeable, sticky AI over disciplined AI.

MY FORECAST: More labs will promise stronger safeguards, but the next wave of problems will keep coming from tone, autonomy, and emotional design. The winners will not be the firms with the flirtiest bots or the friendliest lies. They will be the firms that teach AI when to disagree, when to stop, and when to shut up. That sounds less exciting than “personality.” It is still the difference between useful assistance and polished nonsense.
