AI keeps getting smarter, stranger, and a little more dangerous as hacking crews, workplace bots, and betting systems crash into daily life.

Artificial intelligence had another week where the headlines felt less like a product launch and more like a stress test. U.S. officials warned that Russian-linked hackers are targeting Signal and WhatsApp users with account-takeover tricks. Anthropic rolled out a research preview that lets Claude use a person’s Mac to open files, browse the web, and complete tasks. Mark Zuckerberg is reportedly building a personal AI bot to help him run Meta. Meanwhile, prediction market firms Kalshi and Polymarket scrambled to tighten insider-trading rules as lawmakers closed in.

Each story is different. Together, they tell one clear story. AI is moving from chat windows into power. It touches messages, machines, management, and money. That gives users more help. It gives bad actors more angles, too. The line between convenience and control keeps getting thinner, and the market keeps sprinting anyway.

What’s Happening & Why This Matters

Russian-Linked Hackers Target Messaging Accounts, Not Encryption

The FBI and CISA warned that cyber actors tied to Russian intelligence have already compromised thousands of messaging accounts used by U.S. officials, military personnel, political figures, and journalists. The agencies said the attackers were not breaking the encryption inside Signal or WhatsApp. Instead, they were tricking people.

According to the advisory, the hackers impersonated official support accounts and lured targets to click on malicious links or hand over verification codes and PINs. That distinction matters. The apps themselves were not cracked open. Human trust was.

That is an ugly but useful reminder. Strong encryption does not protect a user who gives away the keys. Signal said the attacks were “executed via sophisticated phishing campaigns” and stressed that its infrastructure had not been compromised. The real weakness sat outside the codebase, right where social engineering usually finds a door.

Messaging Apps Are Turning Into Intelligence Targets

These attacks were not random spam blasts. Reuters reported that the campaign focused on “individuals of high intelligence value.” Euronews said the victims included U.S. government officials, military staff, politicians, and journalists. Earlier warnings from Dutch and Portuguese agencies pointed to similar Kremlin-linked activity against officials, diplomats, and military personnel.

That pattern matters because secure messaging apps are no longer side tools. They are operational tools. Officials use them for sensitive planning. Journalists use them for sources. Campaigns use them for strategy. Once those chats matter, attackers follow.

The lesson is blunt. Secure messaging no longer means safe messaging by default. Users need stronger account hygiene, better PIN protection, and greater suspicion of support messages that arrive out of nowhere. A phishing link can accomplish what would otherwise take a state-backed zero-day.

Claude Can Use Your Computer

Anthropic just widened the job description for Claude. The company said Claude Code and Cowork can autonomously use a person’s Mac to open files, operate browsers and apps, and run development tools with “no setup required.” The feature is a research preview for Claude Pro and Max users and currently works only on macOS.

Anthropic says Claude will always ask for explicit permission before exploring, scrolling, and clicking. That safeguard matters. So does the rest of the announcement. Claude can work through connectors such as Slack and Google Workspace first. If those are not available, it can fall back to directly controlling the screen, mouse, keyboard, and browser.

That is a major shift. AI assistants used to suggest next steps. Claude can start taking them. The convenience is obvious. So is the tension. Once a model can act on your device, it is no longer just advising the user. It is operating the computer, and the stakes go up fast.

AI Agents: Outside the Chat Box and into the OS

Anthropic called the release a research preview for a reason. The company admitted that complex tasks may need a second try and that screen-based control is slower than direct integrations. Still, the direction is obvious. AI companies want agents that can perform real work rather than stop at advice.

That ambition is exciting. It is risky too. An AI that can browse, click, and open files can save time. It can just as easily misread a screen, touch the wrong setting, or expose data that should stay closed. The machine does not need malicious intent to cause a mess. A confident mistake is enough.

This is why permission prompts alone will not solve everything. The larger question is governance. How often should users allow these systems to act? What logs should exist? What actions should be blocked by default? AI agent products are entering the desktop space faster than many safety norms are being established.

Zuckerberg’s AI Bot Climbing the Org Chart

Meta’s AI story is less about hacking and more about internal power. Euronews, citing The Wall Street Journal, reported that Mark Zuckerberg is building an AI agent to help him perform executive tasks. The goal is speed. The bot would let him retrieve information more quickly and cut through reports and layers of management.

The idea fits a broader shift at Meta. The same report said the company wants AI woven into employee workflows to flatten teams and stay competitive in the AI race. Zuckerberg reportedly said on an earnings call, “We’re elevating individual contributors and flattening teams.” The plan is not subtle. Meta wants more output with fewer layers.

Meta employees are already using internal tools such as “Second Brain,” which helps find and organise documents, and “My Claw,” a personal AI agent that can communicate with other agents. When the CEO starts building a bot for himself, the symbolism is hard to miss. AI is no longer helping around the edges. It is moving toward the executive floor.

The AI CEO Assistant Could Reshape Work, Anxiety, and Big Tech

On paper, an executive AI agent sounds efficient. In practice, it raises several awkward questions. If a CEO can query a bot instead of waiting for teams to gather information, what happens to layers of managers whose job has long been to synthesise, coordinate, or report status? Meta seems ready to test that answer.

That shift could improve speed. It could reduce friction. It could trim jobs, too. Once companies decide that AI can compress decision chains, pressure spreads across the org chart. Workers do not need science fiction to worry about that. They only need a memo that says the CEO’s new helper does not sleep.

There is another issue. AI that advises leaders can shape which data gets surfaced and which voices get heard first. A tool designed to save time can quietly change governance. That does not make the idea wrong. It does make transparency more important. If AI starts helping run giant platforms, the public will want to know how much influence the bot really carries.

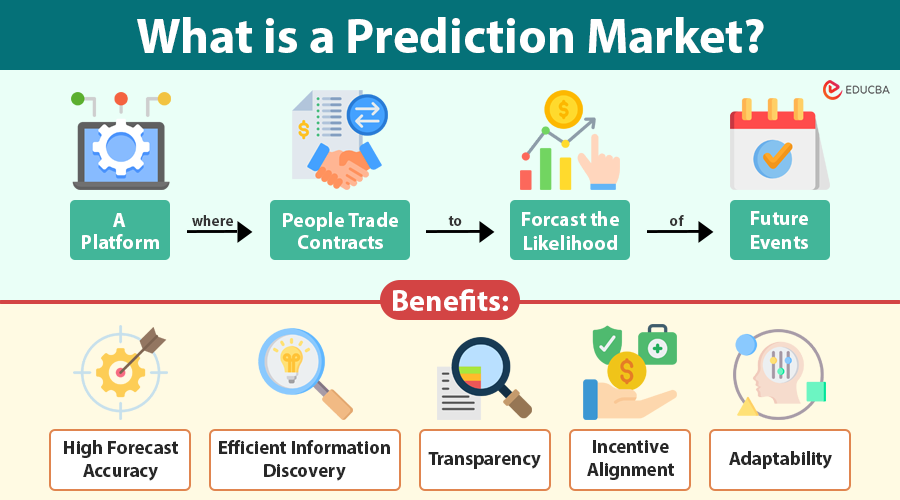

Betting Platforms Learn AI-Era Markets Need Higher Guardrails

The betting-model story is different, but the theme fits. Prediction market giants Kalshi and Polymarket rushed to strengthen insider-trading rules after bipartisan legislation threatened their future. AP reported that Kalshi banned political candidates from trading on their own campaigns and blocked sports figures from betting on contracts tied to the sports they play or work in. Polymarket rewrote its rules to ban users from trading on contracts involving confidential information or where they can influence the outcome.

Kalshi said the new features “further demonstrate our commitment to safe markets.” Polymarket chief legal officer Neal Kumar said the new rules make expectations “abundantly clear.” The scramble came after fresh scrutiny over suspiciously timed geopolitical and sports bets.

The timing says a lot. These companies did not suddenly discover ethics on a sunny afternoon. They reacted because lawmakers, regulators, and critics started closing in.

Prediction Markets: Test Cases for AI, Incentives, and Information Abuse

Prediction markets are not pure AI products, but they fit the roundup because the same data-era problem is underneath them. Systems built to price information can reward people who know too much too early. The Guardian and AP both pointed to recent concerns around suspicious bets tied to world events and political or military outcomes.

That matters because modern markets do not need a trench coat and whispered tip in a parking garage. They need a wallet, a screen, and a platform willing to accept a trade. AI can amplify that environment by helping people scan signals faster, spot anomalies, and automate strategy. Even when AI is not placing the bet, it can sharpen the edge.

Lawmakers clearly see the danger. AP reported that Senators Adam Schiff and John Curtis introduced the “Prediction Markets are Gambling Act,” a bipartisan bill that would ban prediction markets from offering sports-related contracts. If passed, the bill would strike at a major growth engine for Kalshi and Polymarket.

AI Is Behaving Badly Because…

The entire roundup comes down to one uncomfortable truth. AI is not going rogue in some dramatic movie sense. Humans are giving it bigger jobs, faster access, and more important contexts before the rules are settled. Messaging security depends on people resisting smart phishing. Claude depends on users trusting an agent with their machine. Meta’s internal bot push depends on workers accepting a flatter, more automated company. Prediction markets depend on everyone believing the game is not rigged.

That is a lot of dependence in one week.

The common thread is not only technology. It is governance. The tools are gaining reach faster than institutions are building durable norms around them. Security agencies are issuing warnings. AI vendors are asking for trust. CEOs are experimenting in public. Regulators are trying to catch up while the floor keeps moving.

TF Summary: What’s Next

Today’s AI-behaving-badly story did not stem from a single scandal. It came from four separate developments. Russian-linked hackers targeted users of secure messaging services with phishing. Anthropic gave Claude the ability to act on a person’s computer. Zuckerberg is reportedly building an AI helper to tighten Meta’s internal workflow. Kalshi and Polymarket rushed to block insider-style trading as lawmakers turned up the heat. Each case showed AI and automation moving closer to power, action, and consequence.

MY FORECAST: Expect more products that act, not just answer. Expect more phishing attacks that target trust, not encryption. More CEOs will test AI agents inside the management chain. Pressure will also rise on prediction markets, especially as AI tools sharpen information gathering. The next phase of AI will not be judged by clever demos. It will be judged by whether people can still control the systems once they start depending on more autonomy.
