Europe wanted to avoid “chat control.” Instead, it may have handed predators a quieter internet.

A fresh political mess in Europe has opened a dangerous gap in the fight against child sexual abuse material, or CSAM, online. A temporary EU legal carve-out that allowed major platforms to scan for child abuse content has expired. The European Parliament did not extend it before the deadline. That has triggered a fierce response from Google, Meta, Snap, and Microsoft, which say the lapse weakens child protection and creates legal confusion about detection tools already in place.

That sounds like a policy dispute. It is bigger than that. The expired rule sat right in the middle of one of the internet’s hardest arguments: how to protect children online without sliding into mass surveillance. Europe has made the argument messier. Child-safety groups say abuse reports may fall sharply. Privacy advocates say the scanning model was already too invasive. Big tech firms are warning about lost visibility. Meanwhile, predators are not exactly pausing for a legal review.

What’s Happening & Why This Matters

The Expiration of a Temporary CSAM Scanning Rule

The immediate problem is simple. A temporary EU measure that let online platforms scan messages and content for child sexual abuse indicators expired on 3 April 2026. Lawmakers did not extend it in time.

That rule had operated as a carve-out from the EU’s privacy framework. It gave companies a legal basis to use automated detection tools to spot known child sexual abuse material, grooming patterns, and sextortion signals. Without that carve-out, the legal ground under those scanning systems has become much shakier.

That is what triggered the backlash.

In a joint statement, Google, Meta, Snap, and Microsoft said they were “disappointed by this irresponsible failure to reach an agreement to maintain established efforts to protect children online.” Big tech firms do not usually line up shoulder-to-shoulder unless the issue is operationally serious. In this case, the firms say the lapse creates both a safety and a compliance problem.

The awkward part is brutal. Platforms still face pressure to remove illegal material. The legal basis for scanning to find some of that material is weaker than it was a week ago.

That is not a clean policy setup. That is a contradiction with the victims in the middle.

What Child-Safety Groups Say

The strongest warning from child-protection experts is not abstract. They say detection gaps have consequences almost immediately.

Experts pointed to a similar legal disruption in 2021, when reports from EU-based accounts to the National Center for Missing & Exploited Children, or NCMEC, dropped by 58% over 18 weeks. That is not a rounding error. That is a collapse in visibility.

John Shehan, vice-president at NCMEC, put the point plainly: “When detection tools are disrupted, we lose visibility that directly impacts our ability to find and protect child sexual abuse victims.” He added, “When detection goes dark, the abuse doesn’t stop.”

That is the line lawmakers need tattooed onto the debate.

The internet is not safer because the law is cleaner on paper. Abuse networks do not disappear because a legislative compromise stalls. What disappears first is visibility. Once the reporting pipeline weakens, the bad actors keep moving while the evidence trail gets thinner.

That is why a lapse is significant. A lot of internet policy sounds theoretical until one missed vote changes what platforms can detect. Then the theory gets children hurt.

Privacy Concerns vs. the Abuse Problem

This is where the argument gets ugly.

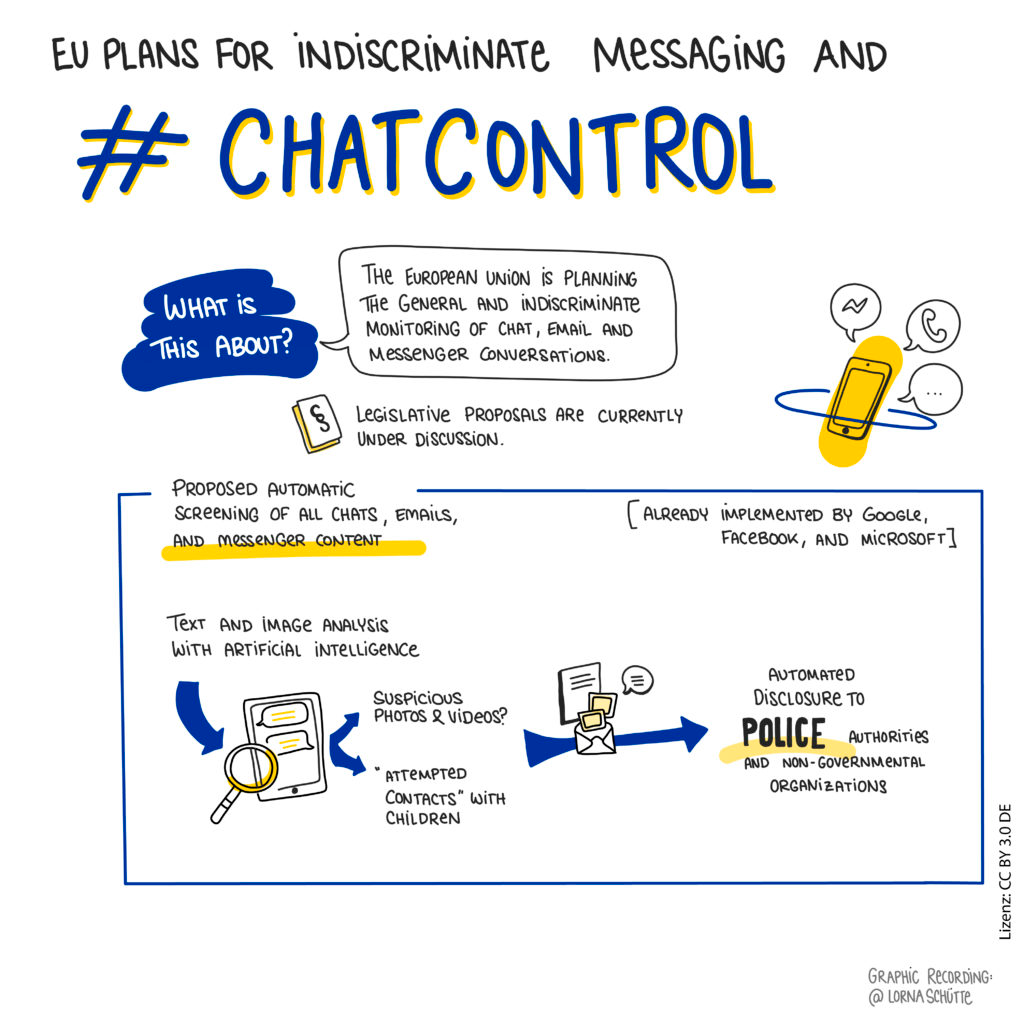

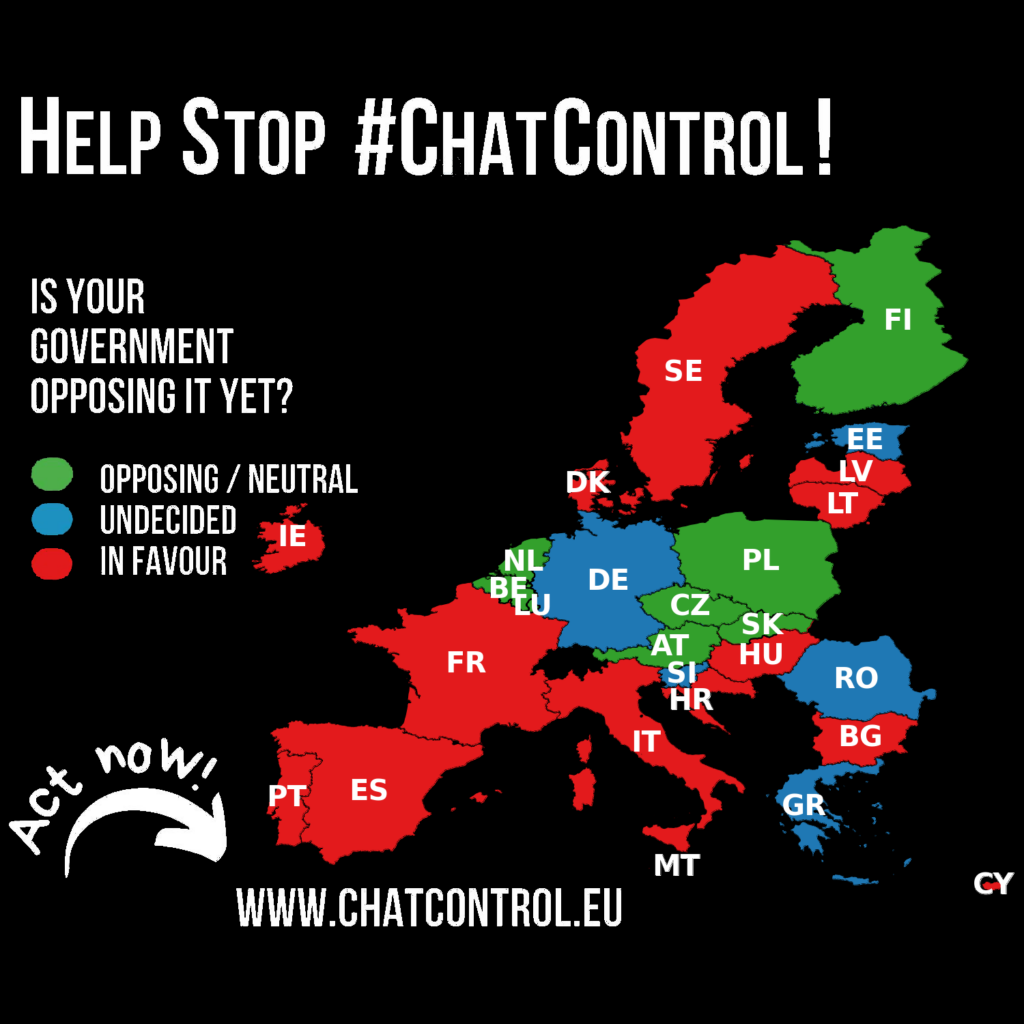

Privacy advocates have spent years warning that automated scanning for abusive content can drift toward surveillance. Some campaigners describe the idea as “chat control.” Their concern is not unfounded. Once governments and platforms create a framework for scanning private communications, people worry that the same machinery could expand into other categories later.

That fear has political force in Europe, where privacy rights carry more legal and cultural weight than in many other regions. Some lawmakers clearly preferred to let the temporary rule lapse rather than bless another extension without a permanent settlement.

Still, the child-safety side has a strong case too. Hannah Swirsky of the Internet Watch Foundation said, “Blocking CSAM is not an invasion of privacy. Free speech does not include sexual abuse of children.” That is hard to argue with unless someone is more committed to theoretical purity than to the ugly facts of online exploitation.

The deeper problem is that each side is pointing at a real risk.

Yes, privacy erosion is dangerous. Yes, child sexual abuse online is dangerous. The EU has not solved the tension. The EU has simply moved from one imperfect arrangement to a riskier gap.

That is not balance. That is drift.

A More Targeted Technology Than Critics Often Admit

One reason this debate keeps overheating is that people imagine the scanning tools in very different ways.

Critics often picture a giant machine peering into everyone’s messages like a digital police officer. Child-safety groups describe something narrower. They say the most common systems rely on hash matching and pattern detection, not some lurid dragnet combing through everyday family photos.

A confirmed abusive image can be turned into a unique digital fingerprint, or hash. Platforms can then compare uploads against those hashes and automatically block exact matches. Similar tools can detect known exploitation patterns and language tied to grooming or sextortion.
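To make the fingerprint idea concrete, here is a minimal sketch of exact-match hash blocking. It is an illustration under stated assumptions, not a description of any platform's actual system: SHA-256 stands in for the fingerprint function, and the `HashBlocklist` class, the `fingerprint` helper, and the placeholder byte strings are all hypothetical names invented for this example. Deployed tools are widely reported to use perceptual hashes that tolerate resizing or re-encoding, which this sketch does not attempt.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return a hex digest that serves as the file's fingerprint.

    Assumption: SHA-256 is used here only as a simple stand-in.
    It matches byte-identical files and nothing else.
    """
    return hashlib.sha256(data).hexdigest()


class HashBlocklist:
    """Hypothetical blocklist holding fingerprints of confirmed material."""

    def __init__(self, known_hashes):
        # Only the fingerprints are stored, never the files themselves.
        self._known = set(known_hashes)

    def is_blocked(self, upload: bytes) -> bool:
        # An upload is blocked only if its fingerprint is already on the list.
        return fingerprint(upload) in self._known


# Placeholder bytes standing in for a file that reviewers have confirmed.
confirmed_item = b"placeholder bytes for a confirmed file"
blocklist = HashBlocklist([fingerprint(confirmed_item)])

print(blocklist.is_blocked(confirmed_item))                 # True
print(blocklist.is_blocked(b"an unrelated holiday photo"))  # False
```

The point of the design is that the check compares fingerprints against a list of already confirmed files. Content that is not on the list produces no match, so the lookup itself says nothing about what an ordinary upload contains.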

Emily Slifer of Thorn said the technology can distinguish illegal abuse material from ordinary or consensual content and that the systems do not store extra personal data in the way critics often imply.

That does not end the privacy debate. It does strip away some of the cartoon version of it.

The real argument is not whether every scanning tool is mass surveillance by default. The real argument is whether Europe can build a durable legal framework that keeps targeted detection alive without opening the door to wider abuse of the same technical infrastructure.

That is a harder job than shouting “privacy” or “safety” across a chamber.

Europe’s Reckless Delay

At some point, the process stops being principled and becomes careless.

Negotiations over a permanent child sexual abuse regulation have dragged on for years. The European Parliament says it is prioritising work on a longer-term framework. Fine. The internet does not pause while institutions workshop the wording.

That is what makes the lapse so politically damaging. Europe had years to avoid the cliff edge. It reached the cliff edge anyway.

The companies are angry. Child-safety groups are angry. Victim advocates are angry. Even people who dislike big tech are left staring at the same uncomfortable truth: the companies may be opportunistic messengers, but they are not wrong that a gap has opened.

The blunt version writes itself. If lawmakers knew a lapse could lead to fewer abuse reports and still let the carve-out expire without a replacement, that looks less like thoughtful governance and more like bureaucratic self-sabotage.

The phrase “irresponsible failure” is sharp. It is not absurd.

A Fight Not Confined To Europe

Online abuse does not respect borders.

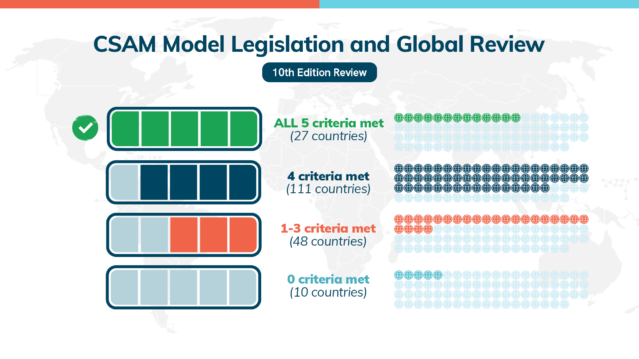

NCMEC said about 90% of the 21.3 million reports it received in 2025 were tied to countries outside the United States. Those reports involved more than 61.8 million images, videos, and other files suspected of being linked to child abuse.

That means Europe’s legal choices do not stay in Europe.

A predator can operate in one country, target a child in another, use a service based in a third, and route content across multiple jurisdictions before anyone even knows the crime happened. If detection weakens inside a major regulatory bloc like the EU, the ripple effects spread outward. Cross-border investigations get harder. Reporting gets thinner. Abuse networks get more room.

That is why Shehan warned that offenders anywhere in the world could gain “unfettered access to minors in Europe” under the current uncertainty.

So yes, this is an EU policy story. It is also a global child-safety story.

When one major jurisdiction pulls legal certainty away from detection systems, everyone else gets more exposed.

TF Summary: What’s Next

Europe’s temporary legal basis for child sexual abuse detection has expired, and lawmakers failed to extend it before the deadline. That has left Google, Meta, Snap, and Microsoft furious, child-safety groups alarmed, and privacy campaigners unconvinced. The biggest risk is not theoretical. When detection tools go dark, reports can fall fast, visibility collapses, and victims are harder to find.

MY FORECAST: The EU will be forced back to the table faster than it wants. The political cost of doing nothing is too high, especially if report volumes dip again. The hard part will be the same as before: building a permanent framework that protects children without triggering a surveillance backlash. Europe tried to avoid one bad outcome and may have walked straight into another. That is not clever policymaking. That is what happens when a digital-age crisis meets old-school legislative drift.
